Nexelia Academy · Official Revision Notes

Complete A-Level revision notes · 20 chapters

This chapter covers how computers represent information using binary, denary, and hexadecimal number systems, including binary arithmetic. It also explores character encoding, multimedia representation for images and sound, and the essential techniques of file compression.

Binary — Base two number system based on the values 0 and 1 only.

Computers use binary because their internal components, like switches, can only be in one of two states: ON (1) or OFF (0). This fundamental system underpins all digital data representation, much like a light switch that can only be on or off.

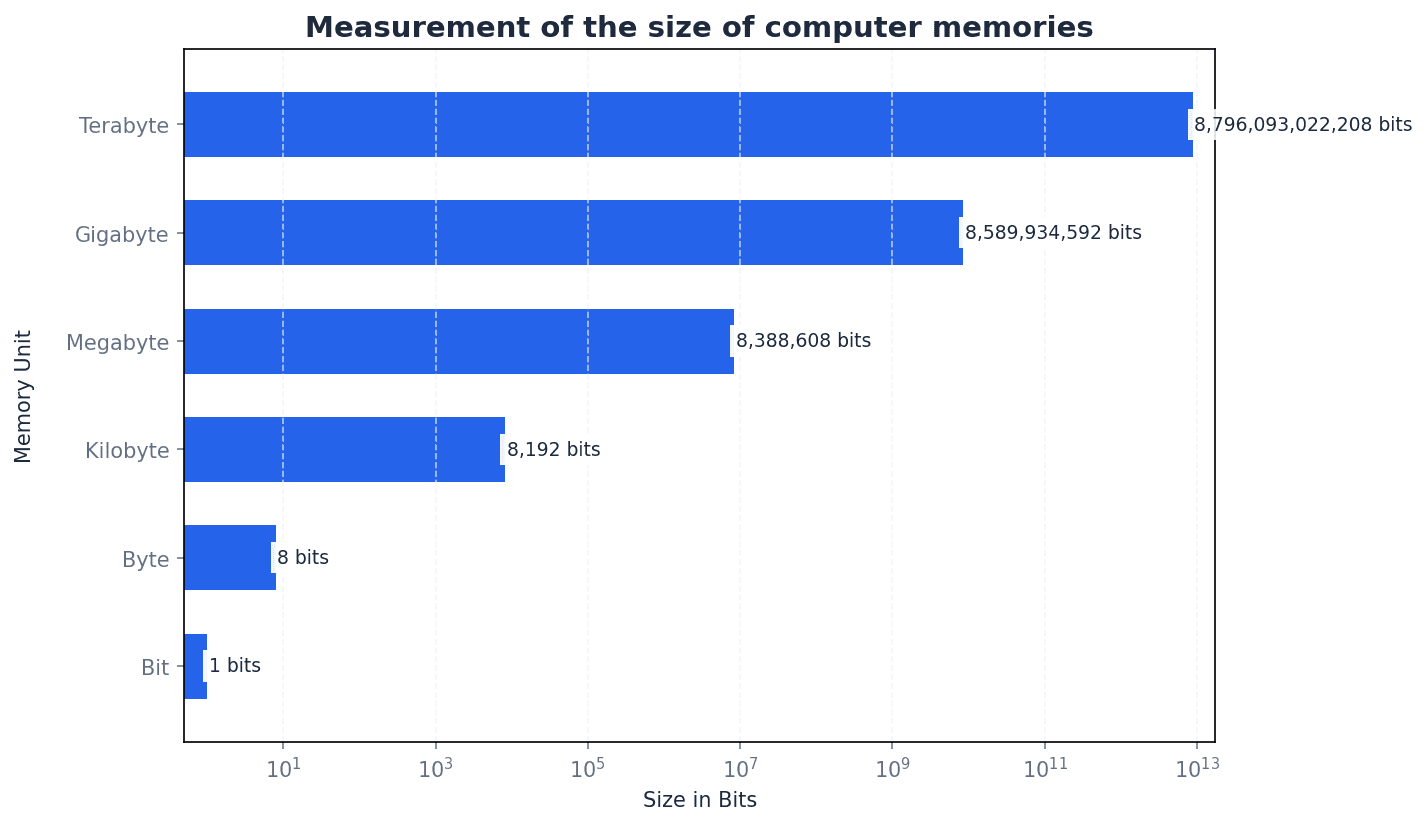

Bit — Abbreviation for binary digit.

A bit is the smallest unit of data in a computer, representing a single 0 or 1. Multiple bits are combined to represent more complex information, similar to how many coins together can represent a larger number, with each coin showing only heads or tails.

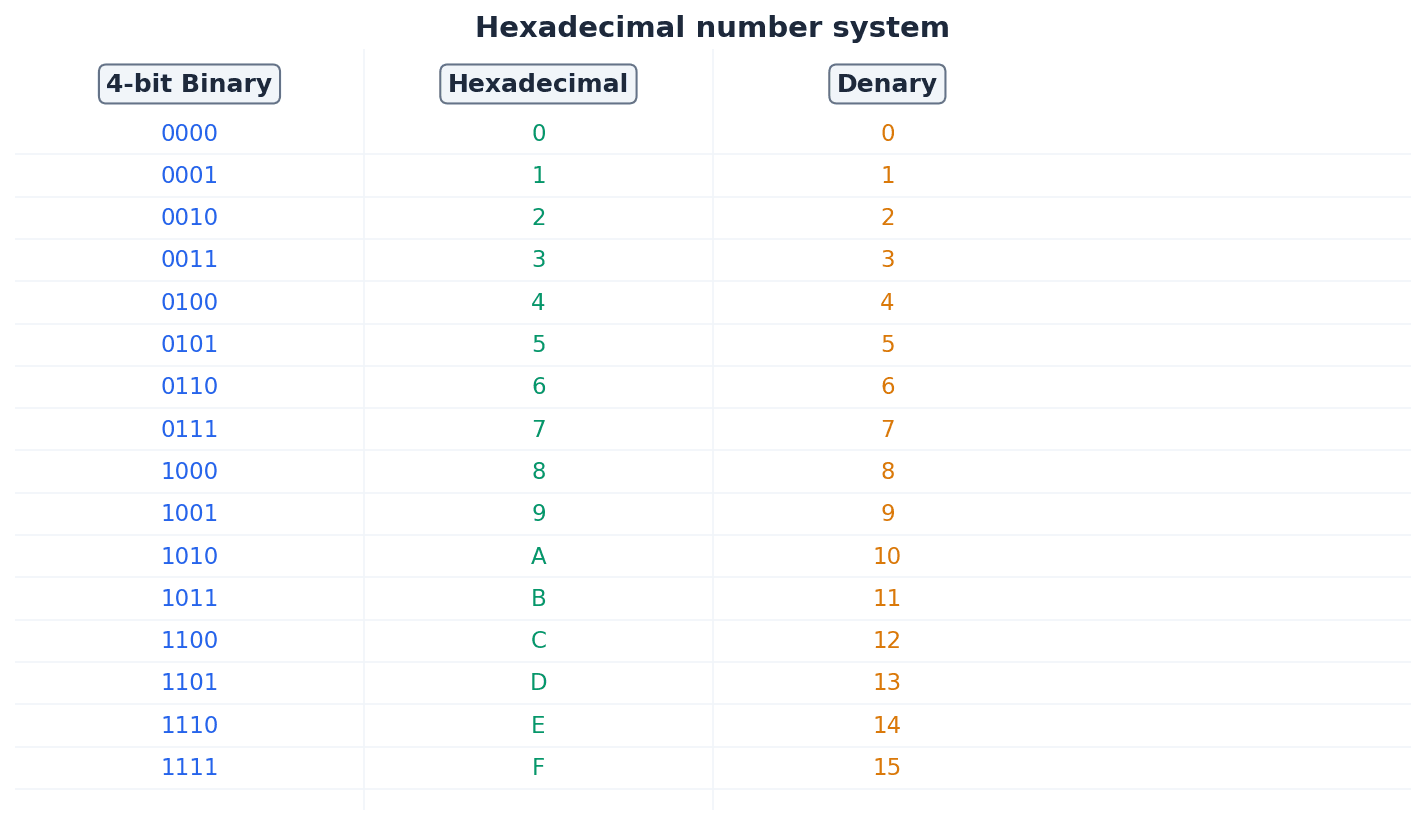

Hexadecimal — A number system based on the value 16 (uses the denary digits 0 to 9 and the letters A to F).

Hexadecimal is a base-16 system that provides a more compact and human-readable representation of binary numbers, as each hexadecimal digit corresponds to four binary bits. It is often used in computing for memory addresses and colour codes, acting as a shorthand for long strings of 0s and 1s.

Binary-coded decimal (BCD) — Number system that uses 4 bits to represent each denary digit.

BCD represents each denary digit (0-9) with its own 4-bit binary code. This system is particularly useful for applications requiring exact decimal representation, such as financial calculations, where rounding errors from pure binary floating-point numbers are unacceptable. It's like converting each digit of a number separately into its own 4-bit binary code.

Students often think BCD is the same as converting a denary number to binary, but actually BCD encodes each denary digit individually, leading to a different binary string.

Computers fundamentally operate using binary, but denary (base 10) is used by humans, and hexadecimal (base 16) offers a more compact representation of binary data. Conversion between these systems is crucial. For instance, converting binary to hexadecimal involves splitting the binary number into 4-bit groups and converting each group to its hexadecimal equivalent. Conversely, converting hexadecimal to binary involves converting each hexadecimal digit into its 4-bit binary code.

Practice conversions between all three number systems (binary, denary, hexadecimal) until they are quick and accurate, as these are foundational marks.

One’s complement — Each binary digit in a number is reversed to allow both negative and positive numbers to be represented.

To find the one's complement of a binary number, all 0s become 1s and all 1s become 0s. This method is one way to represent negative numbers, similar to flipping a switch for every light in a room to reverse its state.

Two’s complement — Each binary digit is reversed and 1 is added in right-most position to produce another method of representing positive and negative numbers.

Two's complement is widely used in computers for representing signed integers because it simplifies arithmetic operations and avoids having two representations for zero. It's like finding the 'opposite' of a number, but with an extra step of adding one to make the arithmetic work seamlessly.

Students often confuse one's complement with two's complement, especially the 'add 1' step for two's complement. Remember that two's complement involves inverting bits THEN adding 1.

Sign and magnitude — Binary number system where left-most bit is used to represent the sign (0 = + and 1 = –); the remaining bits represent the binary value.

In sign and magnitude, the most significant bit indicates whether the number is positive or negative, while the rest of the bits represent the absolute value. This method is intuitive, like writing a plus or minus sign before a number, but complicates arithmetic operations.

Binary addition follows similar rules to denary addition, with carries occurring when the sum of bits is 2 or more. For binary subtraction, the two's complement method is commonly used. This involves converting the subtrahend (the number being subtracted) into its two's complement and then adding it to the minuend. Any overflow bit generated during this addition is typically ignored for the final result.

When performing binary subtraction, always convert the subtrahend to its two's complement and then add; this is a common exam technique.

Memory dump — Contents of a computer memory output to screen or printer.

A memory dump displays the raw data stored in a computer's memory, typically in hexadecimal format, which is useful for debugging software and diagnosing system errors. It's like taking a snapshot of everything currently stored in the computer's active memory, presented in a format that programmers can read.

Character set — A list of characters that have been defined by computer hardware and software.

A character set is a collection of characters that a computer system can recognize and display, each assigned a unique numerical code. It is essential for computers to process and display human-readable text, acting as the complete alphabet and symbol list a computer 'knows'.

ASCII code — Coding system for all the characters on a keyboard and control codes.

ASCII (American Standard Code for Information Interchange) is a 7-bit character encoding standard that represents text characters in computers. It includes uppercase and lowercase letters, numbers, punctuation, and control characters, forming the basis for text communication, much like a universal dictionary where every character has a specific numerical code.

Unicode — Coding system which represents all the languages of the world (first 128 characters are the same as ASCII code).

Unicode is a universal character encoding standard designed to represent text from all of the world's writing systems. It uses up to four bytes per character, allowing for a vast number of characters compared to ASCII, making it suitable for global applications. It's like an expanded, global dictionary that includes every character from every language.

Students often think ASCII can represent all characters in the world, but actually it is limited to 128 (or 256 for extended ASCII) characters, primarily English-based. Unicode is required for comprehensive character sets.

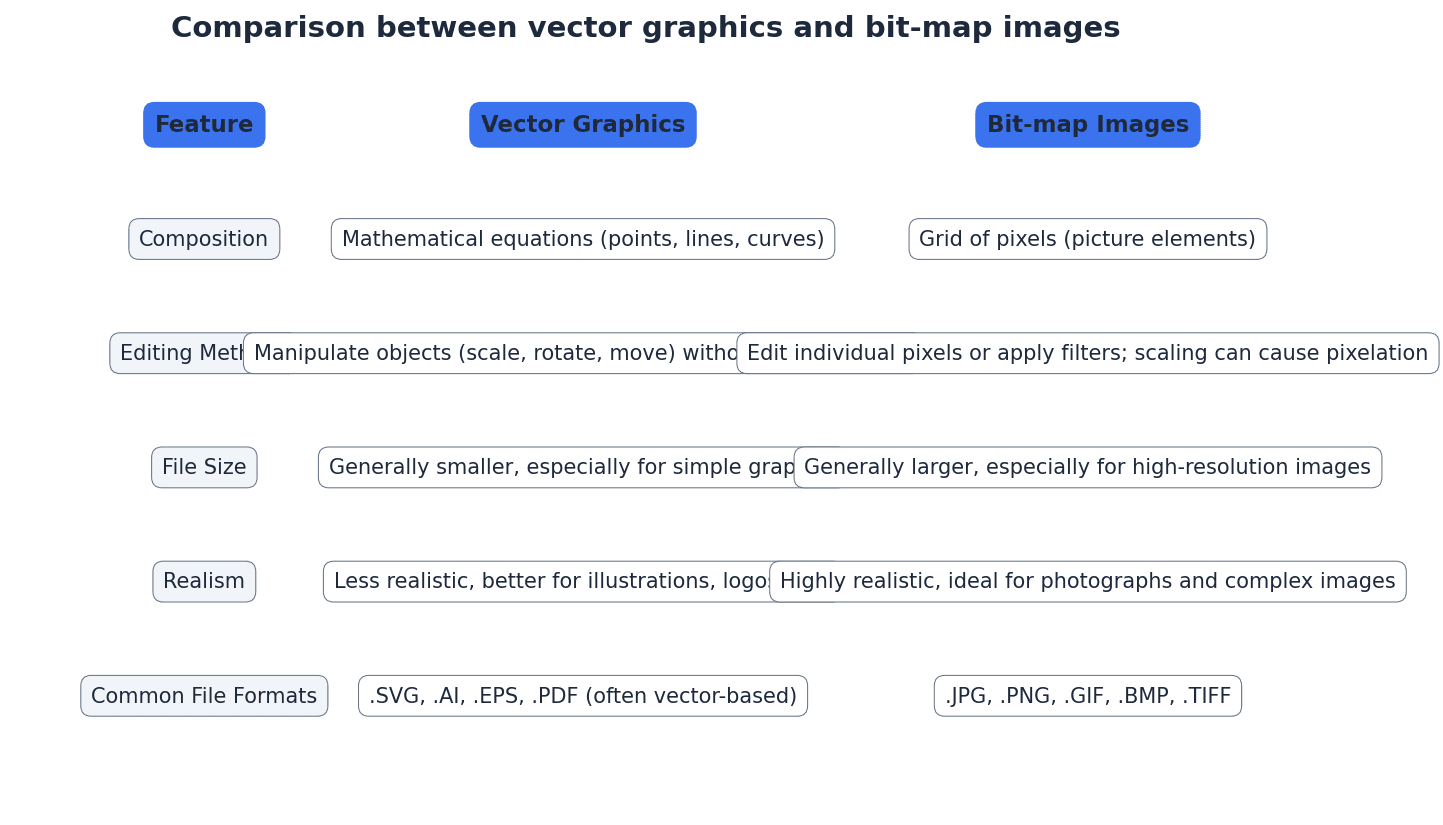

Bit-map image — System that uses pixels to make up an image.

A bit-map image, also known as a raster image, stores an image as a grid of individual pixels. Each pixel's colour information is stored, making them suitable for photographs and realistic images, but they can become pixelated when scaled up. This is like drawing a picture by filling in tiny squares on a grid.

Pixel — Smallest picture element that makes up an image.

A pixel is a single point in a raster image or on a display screen. Each pixel contains colour information, and collectively, millions of pixels form a complete image, much like a single tiny coloured tile in a mosaic.

Colour depth — Number of bits used to represent the colours in a pixel, e.g. 8 bit colour depth can represent 2^8 = 256 colours.

Colour depth determines the number of distinct colours that can be represented by each pixel in an image. A higher colour depth (more bits per pixel) allows for a wider range of colours and a more realistic image, but also increases file size. It's like having a box of crayons; a higher colour depth means more crayons to draw with.

Bit depth — Number of bits used to represent the smallest unit in, for example, a sound or image file – the larger the bit depth, the better the quality of the sound or colour image.

Bit depth is a general term referring to the number of bits used to store information about a single sample (e.g., a pixel's colour or a sound's amplitude). In images, it's synonymous with colour depth; in sound, it's sampling resolution. Think of it as the precision of measurement.

Number of colours from bit depth

Used for calculating the number of possible colours in an image given its colour depth, or the number of amplitude values for sound given its sampling resolution.

Image resolution — Number of pixels that make up an image, for example, an image could contain 4096 × 3192 pixels (12 738 656 pixels in total).

Image resolution specifies the total number of pixels in an image, typically expressed as width × height. Higher image resolution means more detail and sharpness, but also results in larger file sizes. It's like the total number of tiny squares on your drawing grid.

Screen resolution — Number of horizontal and vertical pixels that make up a screen display.

Screen resolution refers to the fixed number of pixels a display device can show. If an image's resolution exceeds the screen's resolution, the image may be scaled down or cropped, potentially affecting its displayed quality. This is like the fixed number of tiny lights on your TV screen.

Students often confuse image resolution (total pixels in an image) with screen resolution (pixels on a display device). Remember that image resolution is an intrinsic property of the image file, while screen resolution is a property of the display device.

Resolution — Number of pixels per column and per row on a monitor or television screen.

Resolution, in a general sense, refers to the detail level of an image or display. For screens, it's the pixel dimensions; for images, it's the total pixel count. Higher resolution generally means more detail, like the clarity of a photograph.

Pixel density — Number of pixels per square centimetre.

Pixel density, often measured in pixels per inch (ppi), indicates how many pixels are packed into a given physical area. Higher pixel density results in sharper, clearer images on a display, especially when viewed up close. Imagine fitting more tiny lights into the same size area on a screen.

Bit-map image file size (bits)

This formula calculates the raw uncompressed file size in bits. To get bytes, divide by 8. To get MB or MiB, further division is needed.

Pixel density (ppi)

This formula calculates the pixel density in pixels per inch (ppi) for a given screen resolution and diagonal screen size.

For image file size calculations, remember the formula: `File size (bits) = Image width × Image height × Bit depth`, and convert to bytes/KB/MB if requested.

Students often assume bit-map images can be scaled indefinitely without quality loss, but actually they lose sharpness and become pixelated when enlarged because the number of pixels remains fixed.

Vector graphics — Images that use 2D points to describe lines and curves and their properties that are grouped to form geometric shapes.

Vector graphics are created using mathematical descriptions of geometric shapes (points, lines, curves, polygons) rather than a grid of pixels. This allows them to be scaled to any size without loss of quality, making them ideal for logos and illustrations. It's like giving a computer instructions to draw shapes using mathematical formulas.

Bit-map images are composed of pixels and are suitable for photographs and realistic images, but they pixelate when scaled. Vector graphics, on the other hand, are defined by mathematical descriptions of shapes, allowing them to be scaled infinitely without any loss of quality. This makes vector graphics ideal for logos, illustrations, and designs that need to be resized frequently.

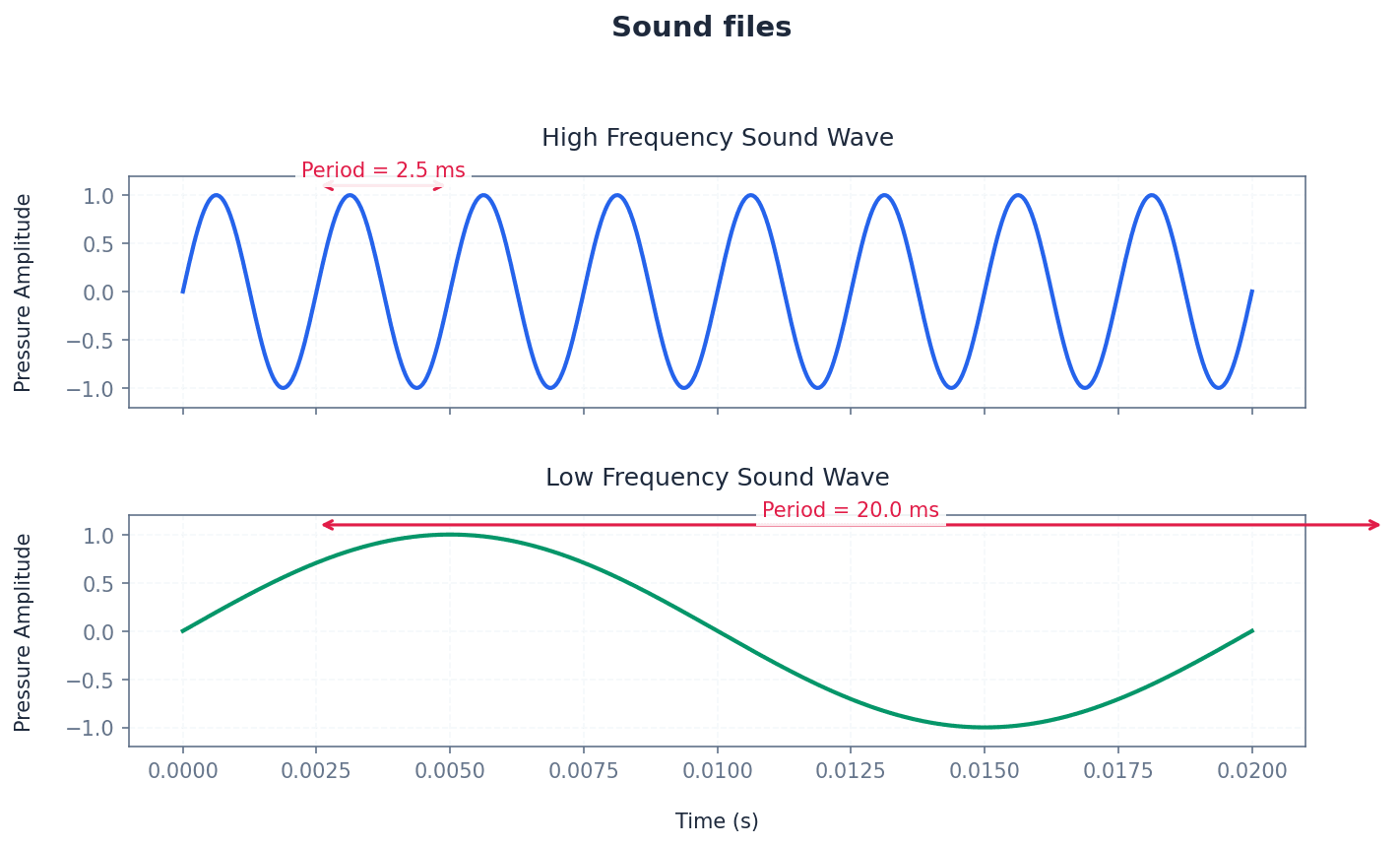

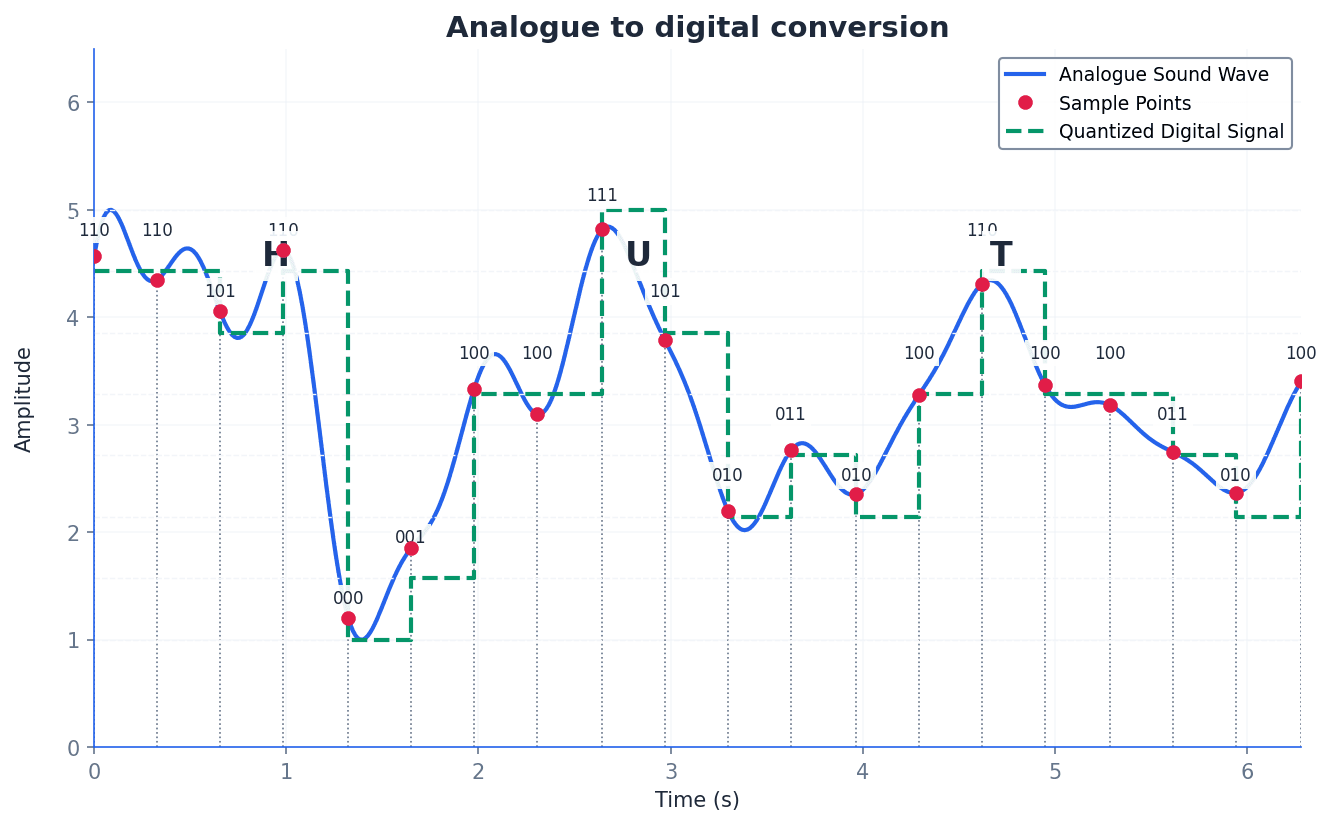

Sampling resolution — Number of bits used to represent sound amplitude (also known as bit depth).

Sampling resolution determines the precision with which the amplitude of a sound wave is measured and stored during digitisation. A higher sampling resolution (more bits) captures more subtle variations in loudness, resulting in better sound quality but larger file sizes. It's like using a more precise ruler to measure the height of a sound wave.

Sampling rate — Number of sound samples taken per second.

Sampling rate determines how frequently an analogue sound wave is measured and converted into digital data. A higher sampling rate captures more points along the wave, resulting in a more accurate digital representation of the original sound and better quality, but also a larger file size. Imagine taking more snapshots of a moving object per second.

Students often confuse sampling rate (how often samples are taken) with sampling resolution (the precision of each sample's amplitude) for sound files. Remember that rate is about frequency, and resolution is about precision.

Frame rate — Number of video frames that make up a video per second.

Frame rate determines how many still images (frames) are displayed sequentially per second to create the illusion of motion in a video. A higher frame rate results in smoother, more fluid motion but increases the video file size. It's like flipping through a stack of drawings; more drawings per second make the animation smoother.

Lossless file compression — File compression method where the original file can be restored following decompression.

Lossless compression algorithms reduce file size by identifying and encoding redundant data without discarding any information. This ensures that when the file is decompressed, it is an exact replica of the original, making it suitable for critical data like text documents or spreadsheets. It's like packing a suitcase more efficiently by folding clothes neatly.

Run length encoding (RLE) — A lossless file compression technique used to reduce text and photo files in particular.

Run-length encoding (RLE) is a lossless compression method that replaces sequences of identical data values (runs) with a single data value and a count. For example, 'AAAAABBC' becomes 'A5B2C1'. This is particularly effective for images with large areas of uniform colour or repetitive text.

Lossy file compression — File compression method where parts of the original file cannot be recovered during decompression, so some of the original detail is lost.

Lossy compression algorithms achieve significant file size reduction by permanently discarding data deemed less important or imperceptible to human senses. This is commonly used for multimedia files like images (JPEG) and audio (MP3) where some quality degradation is acceptable for smaller file sizes. It's like summarising a long book, keeping main points but leaving out details.

JPEG — Joint Photographic Expert Group – a form of lossy file compression based on the inability of the eye to spot certain colour changes and hues.

JPEG is a widely used lossy compression standard for digital images, particularly photographs. It achieves high compression ratios by discarding visual information that the human eye is less sensitive to, making it ideal for web images and storage where some quality compromise is acceptable. It's like a smart artist who knows which details in a painting you won't notice anyway.

MP3/MP4 files — File compression method used for music and multimedia files.

MP3 (MPEG-3) is a lossy audio compression format that significantly reduces the size of audio files by removing sounds outside human hearing range or quieter sounds masked by louder ones. MP4 (MPEG-4) is a broader container format that can store audio, video, images, and subtitles, also often using lossy compression for its components. MP3 is like a smart DJ who removes sounds you can't hear, while MP4 is a multimedia box for various compressed content.

Audio compression — Method used to reduce the size of a sound file using perceptual music shaping.

Audio compression techniques reduce the file size of sound recordings by identifying and removing redundant or perceptually irrelevant information. Perceptual music shaping is a key aspect, focusing on what the human ear can and cannot detect. It's like editing a recording to remove background noise and sounds too high or low for human hearing.

Perceptual music shaping — Method where sounds outside the normal range of hearing of humans, for example, are eliminated from the music file during compression.

Perceptual music shaping is a technique used in lossy audio compression (like MP3) that leverages psychoacoustics. It removes frequencies beyond human hearing and quieter sounds that are masked by louder ones, significantly reducing file size with minimal perceived quality loss. Imagine a sound engineer who knows exactly what sounds you can't hear and removes them.

Bit rate — Number of bits per second that can be transmitted over a network. It is a measure of the data transfer rate over a digital telecoms network.

Bit rate, in the context of compressed media, refers to the amount of data (bits) used per second to encode a continuous medium like audio or video. A higher bit rate generally means better quality but a larger file size and higher bandwidth requirement. Think of it as the 'density' of information in a stream.

When asked to 'explain' concepts like compression or sound representation, describe the process and the impact of parameters (e.g., higher sampling rate = better quality, larger file).

Be prepared to compare and contrast bit-map and vector graphics, focusing on their underlying data representation, scalability, and typical uses.

When discussing quality, link higher bit depth directly to better quality (more colours, more accurate sound amplitude) and larger file sizes.

Definitions Bank

Binary

Base two number system based on the values 0 and 1 only.

Bit

Abbreviation for binary digit.

One’s complement

Each binary digit in a number is reversed to allow both negative and positive numbers to be represented.

Two’s complement

Each binary digit is reversed and 1 is added in right-most position to produce another method of representing positive and negative numbers.

Sign and magnitude

Binary number system where left-most bit is used to represent the sign (0 = + and 1 = –); the remaining bits represent the binary value.

+26 more definitions

View all →Common Mistakes

Confusing one's complement with two's complement.

Remember that two's complement involves inverting all bits THEN adding 1 to the right-most bit.

Misunderstanding that BCD encodes each denary digit separately.

BCD represents each denary digit (0-9) with its own 4-bit binary code, rather than converting the entire denary number to a single binary value.

Believing that ASCII can represent all global characters.

ASCII is limited to 128 (or 256 for extended ASCII) characters, primarily English-based. Unicode is necessary for representing characters from all global languages.

+3 more

View all →This chapter explores the foundational concepts of computer networking, covering various network types, architectural models, and essential hardware. It delves into how devices communicate, from local connections to the global internet, and examines the benefits and challenges of connecting systems.

Node — Device connected to a network (it can be a computer, storage device or peripheral device).

A node is any active electronic device attached to a network that can send, receive, or forward information. This broad term includes computers, printers, servers, and other network-enabled hardware, acting as a distinct point that can send or receive traffic.

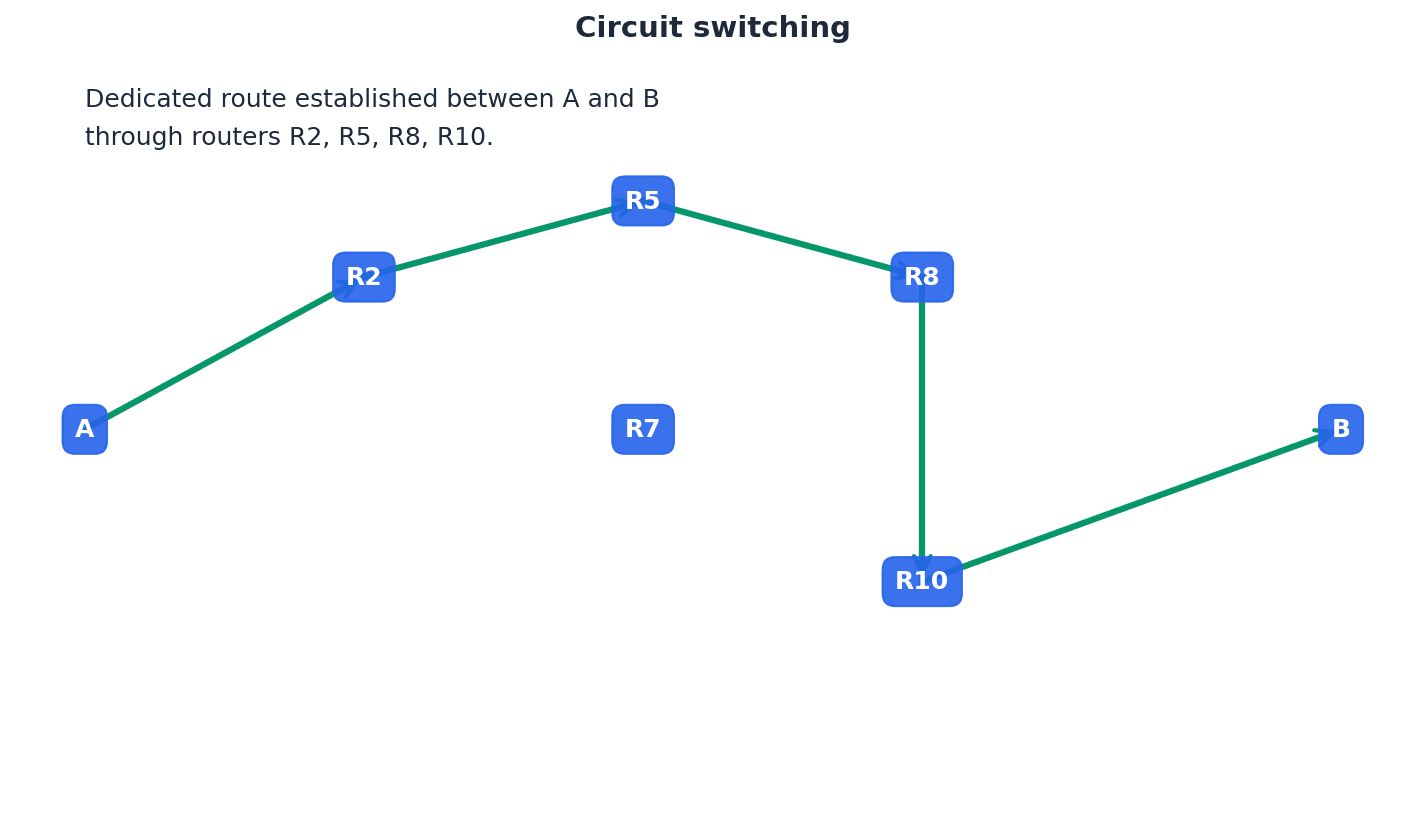

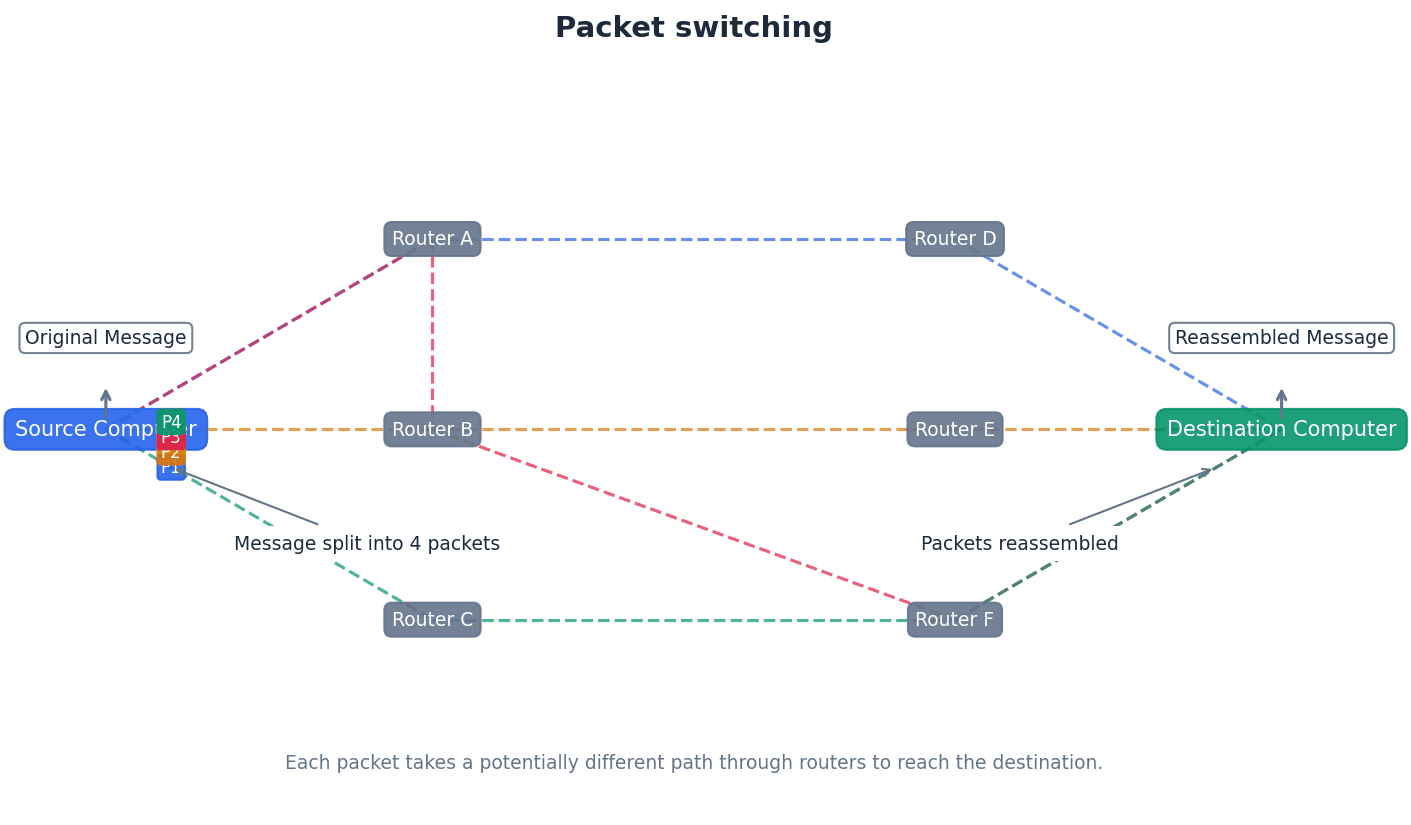

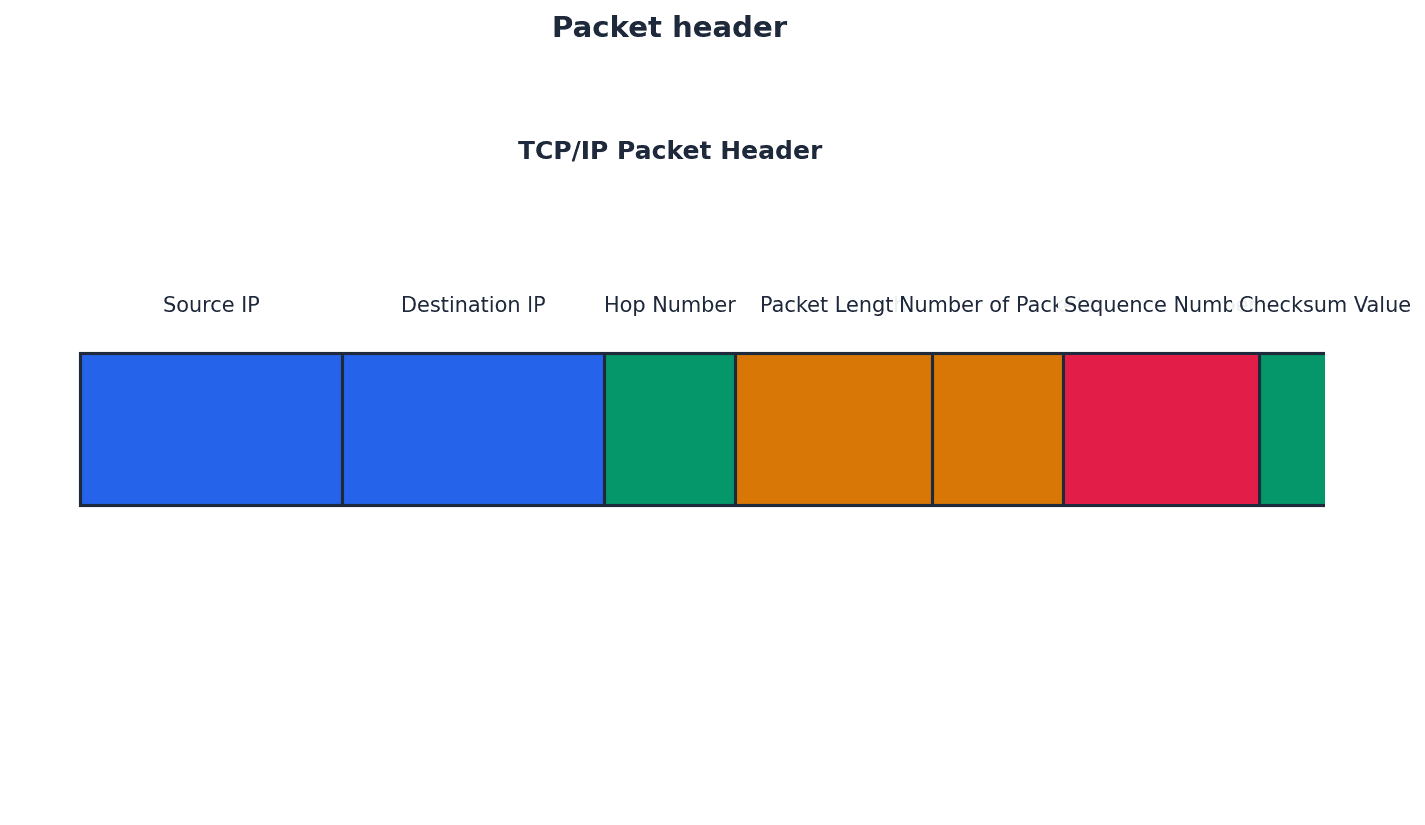

Packet — Message/data sent over a network from node to node (packets include the address of the node sending the packet, the address of the packet recipient and the actual data).

Data transmitted over networks is broken down into smaller units called packets. Each packet contains a portion of the actual data along with control information, such as source and destination addresses, ensuring it reaches the correct recipient and can be reassembled. This is like a small, individually addressed envelope containing a piece of a larger letter.

Students often think data is sent as one continuous stream, but actually it's broken into packets for efficient routing and error handling across networks.

LAN — Local area network, a network covering a small area such as a single building.

LANs connect a number of computers and shared devices, like printers, within a limited geographical space, typically contained within one building or a small campus. They are like the internal communication system within a single office building, allowing employees to share resources easily.

Students often think all networks are the same, but actually LANs are distinct due to their small geographical scope and typically private ownership.

WLAN — Wireless LAN.

WLANs provide wireless network communications over short distances (up to 100 metres) using radio or infrared signals, eliminating the need for physical cables. They rely on wireless access points (WAPs) to connect devices to the wired network, much like a cordless phone system for your computer network.

WAP — (Wireless) access point which allows a device to access a LAN without a wired connection.

WAPs are devices connected to a wired network at fixed locations, enabling wireless devices to connect to the LAN using radio or infrared signals. They receive and transmit data between the wireless and wired network structures, acting like a radio tower for your local network.

Students often think a WAP is a router, but actually a WAP primarily extends a wired network wirelessly, while a router connects different networks and routes traffic.

MAN — Metropolitan area network, a network which is larger than a LAN but smaller than a WAN, which can cover several buildings in a single city, such as a university campus.

MANs connect multiple LANs within a city-wide geographical area, providing connectivity across different buildings or sites within that city. They are restricted in size geographically to, for example, a single city, similar to a city-wide bus system connecting different neighborhoods.

Students often think MANs are just large LANs, but actually they bridge the gap between LANs and WANs by covering a city-sized area, often connecting multiple distinct LANs.

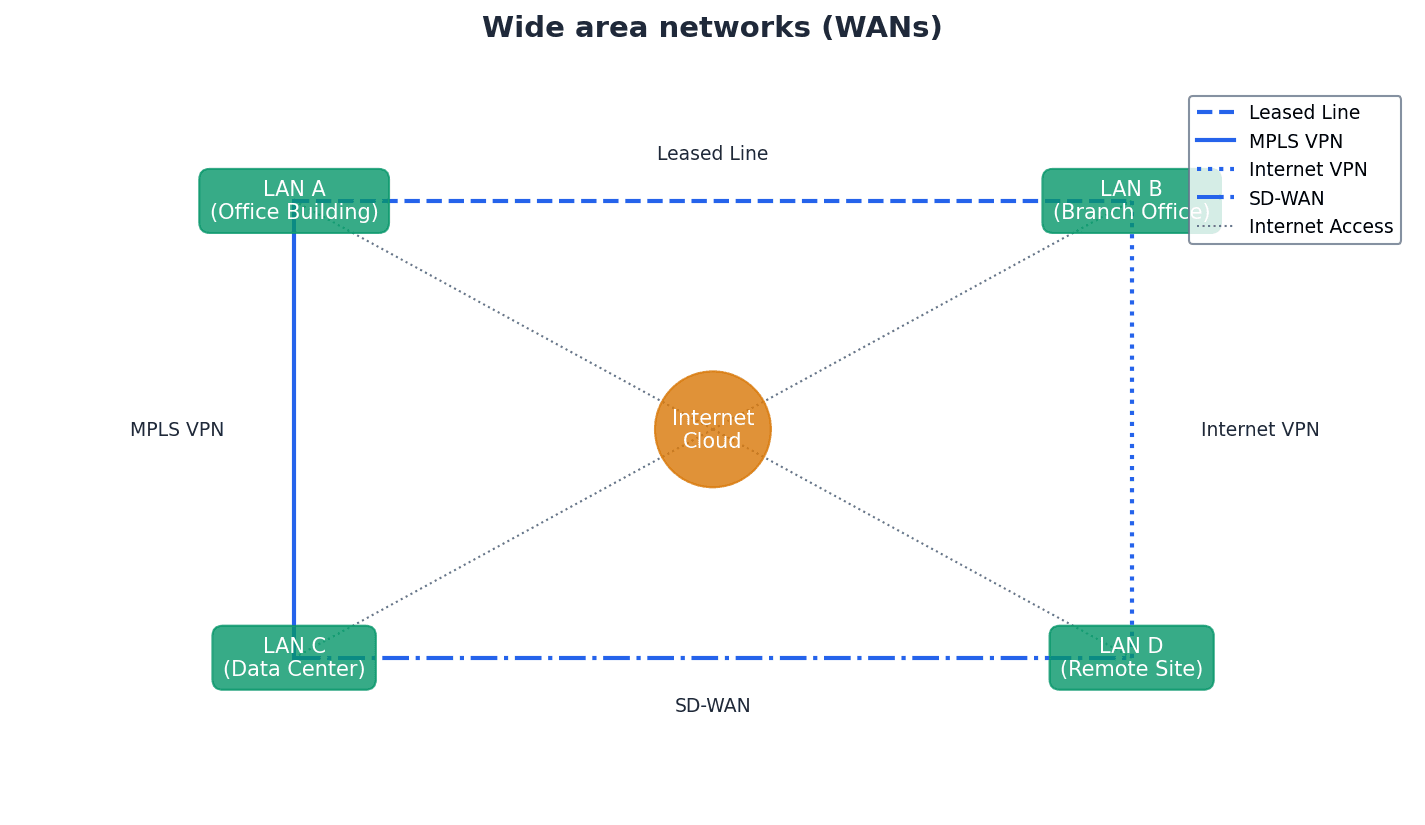

WAN — Wide area network, a network covering a very large geographical area.

WANs typically consist of multiple LANs connected via public communication networks like telephone lines or satellites, spanning countries or continents. They are used for long-distance communication between geographically dispersed locations, much like a global postal service connecting many local post offices.

Students often think the internet is a WAN, but actually the internet is a vast number of decentralised networks and computers with a common point of access, making it intrinsically different from a single WAN.

PAN — Network that is centred around a person or their workspace.

A PAN is a very small network, typically connecting devices in close proximity to an individual, such as a laptop, smartphone, tablet, and printer within a user's house. It's like a personal bubble of connectivity around you, linking your phone, headphones, and smartwatch together.

Students often think PANs are always Bluetooth, but actually while Bluetooth is common, PAN is a broader term for any personal-scale network, wired or wireless.

WPAN — Wireless personal area network. A local wireless network which connects together devices in very close proximity (such as in a user’s house); typical devices would be a laptop, smartphone, tablet and printer.

A WPAN is a wireless network designed for short-range communication between devices centered around an individual's workspace or home. Bluetooth is a common technology used to create WPANs, enabling devices like headphones, phones, and computers to connect wirelessly, like an invisible bubble of connectivity.

Students often think WPANs are just Bluetooth, but actually Bluetooth is a technology used to create a WPAN, which is the broader concept of a personal wireless network.

For network types (LAN, WAN, MAN, PAN), clearly differentiate by geographical scope and typical use cases.

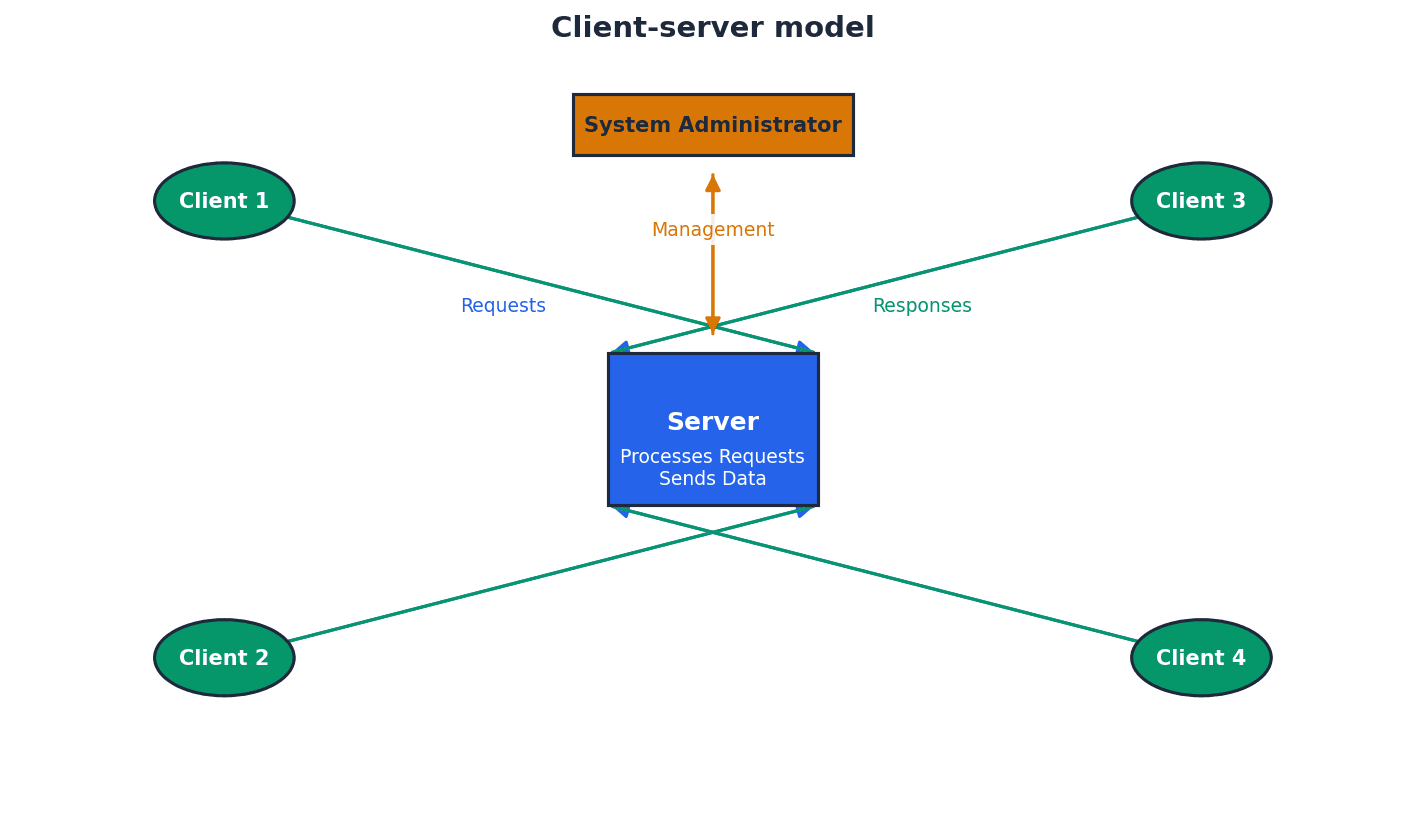

Client-server — Network that uses separate dedicated servers and specific client workstations.

In a client-server model, client computers connect to dedicated servers to access files and resources, with the server managing security, access rights, and central data storage. This model offers greater security and scalability, much like a restaurant where the kitchen (server) prepares food and waiters (clients) deliver it.

Students often think clients and servers are equal, but actually servers have dedicated roles for resource management and security, while clients request and consume those resources.

File server — A server on a network where central files and other data are stored.

File servers enable users logged onto the network to access shared files and information, providing central storage and management of data. This allows for easier data sharing and central backups, similar to a central library where all resources are kept for students to access.

Peer-to-peer — Network in which each node can share its files with all the other nodes.

In a peer-to-peer network, there is no central server; each node acts as both a client and a server, sharing its own data and resources directly with other nodes. This model is typically used for small networks with less stringent security requirements, much like a group of friends sharing files directly from their own laptops.

When comparing client-server and peer-to-peer, discuss advantages and disadvantages for both models, including scalability and security.

Thin client — Device that needs access to the internet for it to work and depends on a more powerful computer for processing.

A thin client, whether hardware or software, has limited local processing power and storage, relying heavily on a central server or powerful computer for most of its functionality. It will not work without a constant connection to the server, much like a remote control for a smart TV that relies entirely on the TV for complex tasks.

Students often confuse thin clients with simply 'cheap computers', overlooking their fundamental dependence on a server for processing.

Thick client — Device which can work both off line and on line and is able to do some processing even if not connected to a network/internet.

A thick client, whether hardware or software, possesses significant local processing power, storage, and an operating system, allowing it to perform tasks independently even when disconnected from a network or server. It can still connect to networks for additional functionality, similar to a fully-equipped laptop that can run programs offline.

When comparing thin and thick clients, highlight the thin client's reliance on a server for processing and its inability to function offline as key distinguishing features.

Network topologies define the physical or logical arrangement of nodes and connections within a network. Different topologies offer varying levels of reliability, performance, and ease of management, each with its own set of advantages and disadvantages regarding fault tolerance and data flow.

Bus network topology — Network using single central cable in which all devices are connected to this cable so data can only travel in one direction and only one device is allowed to transmit at a time.

In a bus topology, all nodes share a single communication line, requiring terminators at each end to prevent signal reflection. While easy to expand and requiring little cabling, a failure in the main cable brings down the entire network, and performance degrades under heavy load. It's like a single-lane road where only one car can travel at a time.

Students often think bus networks are robust, but actually they have a single point of failure (the main cable) which can bring down the entire network.

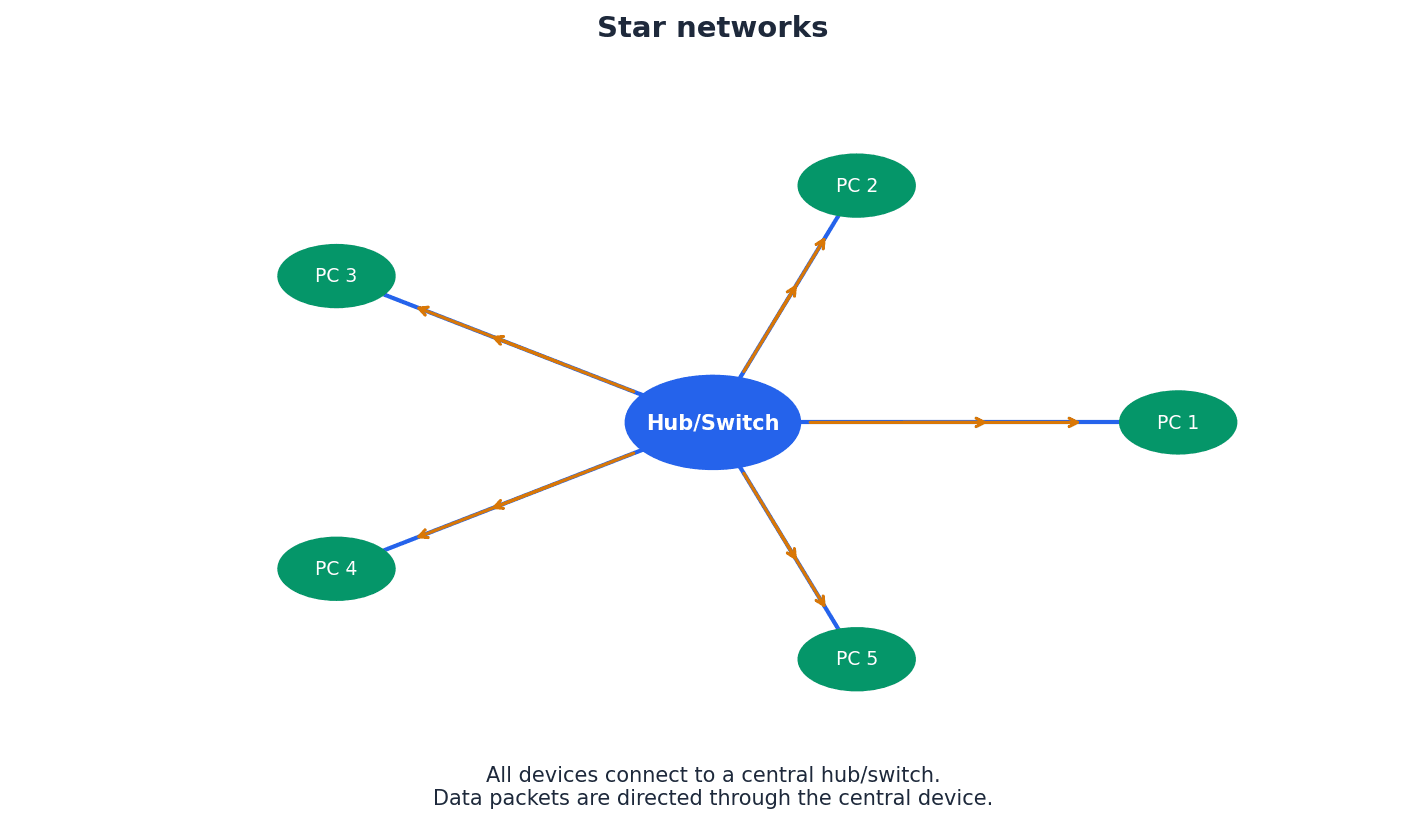

Star network topology — A network that uses a central hub/switch with all devices connected to this central hub/switch so all data packets are directed through this central hub/switch.

In a star topology, each device has a dedicated connection to a central hub or switch. This reduces data collisions, improves security, and makes it easier to identify faults, as a single connection failure only affects one node. It's like a central telephone exchange where every phone has its own direct line to the operator.

Students often think a star network is immune to failure, but actually if the central hub/switch fails, the entire network goes down.

Mesh network topology — Interlinked computers/devices, which use routing logic so data packets are sent from sending stations to receiving stations only by the shortest route.

Mesh networks feature multiple redundant connections between nodes, allowing data to be routed dynamically via the shortest path and re-routed if a node fails. This provides high reliability and security but is complex and expensive to set up, much like a city with many interconnected roads and intelligent GPS systems.

Students often think mesh networks are only for small areas, but actually they are commonly used for large-scale networks like the internet and WANs due to their robustness.

Hybrid network — Network made up of a combination of other network topologies.

A hybrid network combines two or more different topologies (e.g., bus and star) to leverage the advantages of each and accommodate diverse networking needs. While complex to install and maintain, they can handle large traffic volumes and are well-suited for larger, evolving networks, similar to a transportation system using both buses and taxis.

For network topologies, be prepared to draw and label diagrams, and explain the impact of a single point of failure for each.

Cloud computing involves storing and accessing data and programs over the internet instead of directly on your computer's hard drive. This model offers flexibility and scalability, allowing users to access resources from anywhere with an internet connection, but also introduces considerations regarding data security and control.

Cloud storage — Method of data storage where data is stored on off-site servers.

Cloud storage involves storing data on a network of remote servers, rather than directly on a user's device. This data is often replicated across multiple servers (data redundancy) to ensure availability and reliability, managed by a hosting company. It's like keeping your important documents in a secure, professional vault with multiple copies.

Data redundancy — Situation in which the same data is stored on several servers in case of maintenance or repair.

Data redundancy is a strategy used in cloud storage and other data management systems to ensure data availability and prevent loss. By storing multiple copies of the same data on different servers, the system can continue to operate even if one server fails or requires maintenance. This is like having multiple spare tires or copies of a key document.

Students often believe that data redundancy is wasteful, rather than a crucial strategy for data availability and disaster recovery.

When discussing cloud computing, ensure you cover both pros and cons, including aspects of data security and potential data loss.

Networks can be established using either wired or wireless technologies, each with distinct characteristics regarding speed, range, security, and cost. Wired networks typically offer higher speeds and greater security, while wireless networks provide convenience and mobility.

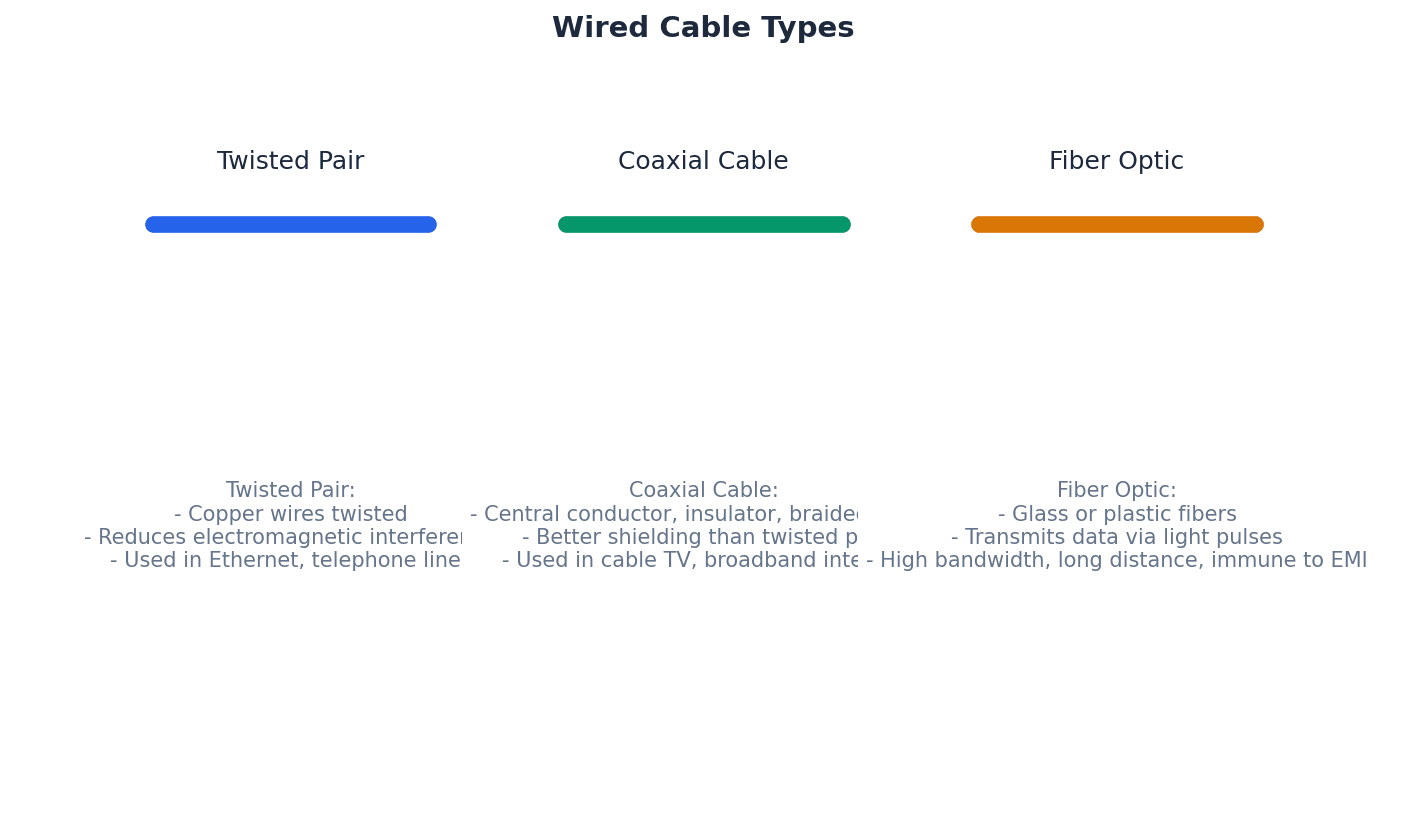

Twisted pair cable — Type of cable in which two wires of a single circuit are twisted together.

Twisted pair cables are the most common type used in LANs, consisting of multiple twisted pairs within a single cable. The twisting helps reduce electromagnetic interference, but they have the lowest data transfer rate and are the cheapest option among wired cables. This is like two people walking arm-in-arm to avoid bumping into others.

Coaxial cable — Cable made up of central copper core, insulation, copper mesh and outer insulation.

Coaxial cable is a type of electrical cable consisting of a central conductor surrounded by an insulating layer, a metallic shield, and an outer insulating jacket. This design helps to minimize signal loss and electromagnetic interference, making it suitable for higher bandwidth applications than twisted pair.

Fibre optic cable — Cable made up of glass fibre wires which use pulses of light (rather than electricity) to transmit data.

Fibre optic cables transmit data using pulses of light through thin strands of glass or plastic. They offer significantly higher bandwidth, greater distances, and immunity to electromagnetic interference compared to copper cables, making them ideal for high-speed, long-distance communication.

Wi-Fi — Wireless connectivity that uses radio waves, microwaves.

Wi-Fi implements IEEE 802.11 protocols and uses spread spectrum technology to offer fast data transfer rates, good range (up to 100 metres), and better security than Bluetooth, making it suitable for full-scale networks and internet access. It's like a powerful, high-speed radio station for your devices.

Students often think Wi-Fi and Bluetooth are the same, but actually Wi-Fi offers much faster speeds, greater range, and is designed for network connectivity, while Bluetooth is for short-range device pairing.

Bluetooth — Wireless connectivity that uses radio waves in the 2.45 GHz frequency band.

Bluetooth uses spread spectrum frequency hopping across 79 channels to create secure wireless personal area networks (WPANs) for short-range data transfer (less than 30 metres). It's ideal for low-bandwidth applications where speed is not critical, like a short-range, private walkie-talkie system for your devices.

Students often think Bluetooth is for internet access, but actually it's primarily for connecting devices directly to each other over short distances, not for general internet connectivity.

Spread spectrum technology — Wideband radio frequency with a range of 30 to 50 metres.

This technology is used in wireless communications like Wi-Fi and Bluetooth to spread a signal over a wider frequency band, making it more resistant to interference and eavesdropping. It often involves frequency hopping to avoid busy channels, similar to having a conversation jump between many different frequencies quickly.

Spread spectrum frequency hopping — A method of transmitting radio signals in which a device picks one of 79 channels at random.

If the chosen channel is already in use, the device randomly chooses another channel, and communication pairs constantly change frequencies several times a second. This technique minimizes interference and enhances security in wireless technologies like Bluetooth, like a secret conversation jumping between radio channels.

Students often think frequency hopping is just about avoiding interference, but actually it also contributes to the security of the connection by making it harder to intercept.

Frequency and Wavelength Relationship

Used to calculate the relationship between frequency, wavelength, and the speed of light for electromagnetic radiation.

Various hardware components are crucial for the functioning of both local and wide area networks. These devices manage data flow, connect different network segments, and enable communication between devices, ensuring efficient and reliable network operations.

Hub — Hardware used to connect together a number of devices to form a LAN that directs incoming data packets to all devices on the network (LAN).

Hubs are basic network devices that broadcast all incoming data packets to every connected device, regardless of the intended recipient. This makes them less secure and less efficient than switches, much like a megaphone in a room where everyone hears the message.

Students often think hubs and switches perform the same function, not understanding that hubs broadcast data while switches direct it to specific destinations.

Repeating hubs — Network devices which are a hybrid of hub and repeater unit.

Repeating hubs combine the functionality of a hub, which broadcasts data to all connected devices, with that of a repeater, which boosts the signal. This allows them to extend the reach of a network while still operating at the basic broadcast level of a hub.

Switch — Hardware used to connect together a number of devices to form a LAN that directs incoming data packets to a specific destination address only.

Switches are more intelligent than hubs; they read the MAC address in data packets and forward them only to the intended recipient device. This improves network efficiency and security by reducing unnecessary traffic, much like a smart post office that delivers mail only to the correct recipient.

Students often think switches broadcast data like hubs, but actually switches learn MAC addresses and send data only to the specific destination, making them more efficient.

Repeater — Device used to boost a signal on both wired and wireless networks.

A repeater is a network device that regenerates and retransmits a signal to extend its reach. As signals travel over distance, they can degrade; a repeater amplifies the signal, allowing it to cover longer distances without loss of quality.

Bridge — Device that connects LANs which use the same protocols.

A bridge is a network device that connects two or more local area networks (LANs) that use the same communication protocols. It filters data traffic by forwarding packets only to the segment where the destination device is located, improving network efficiency.

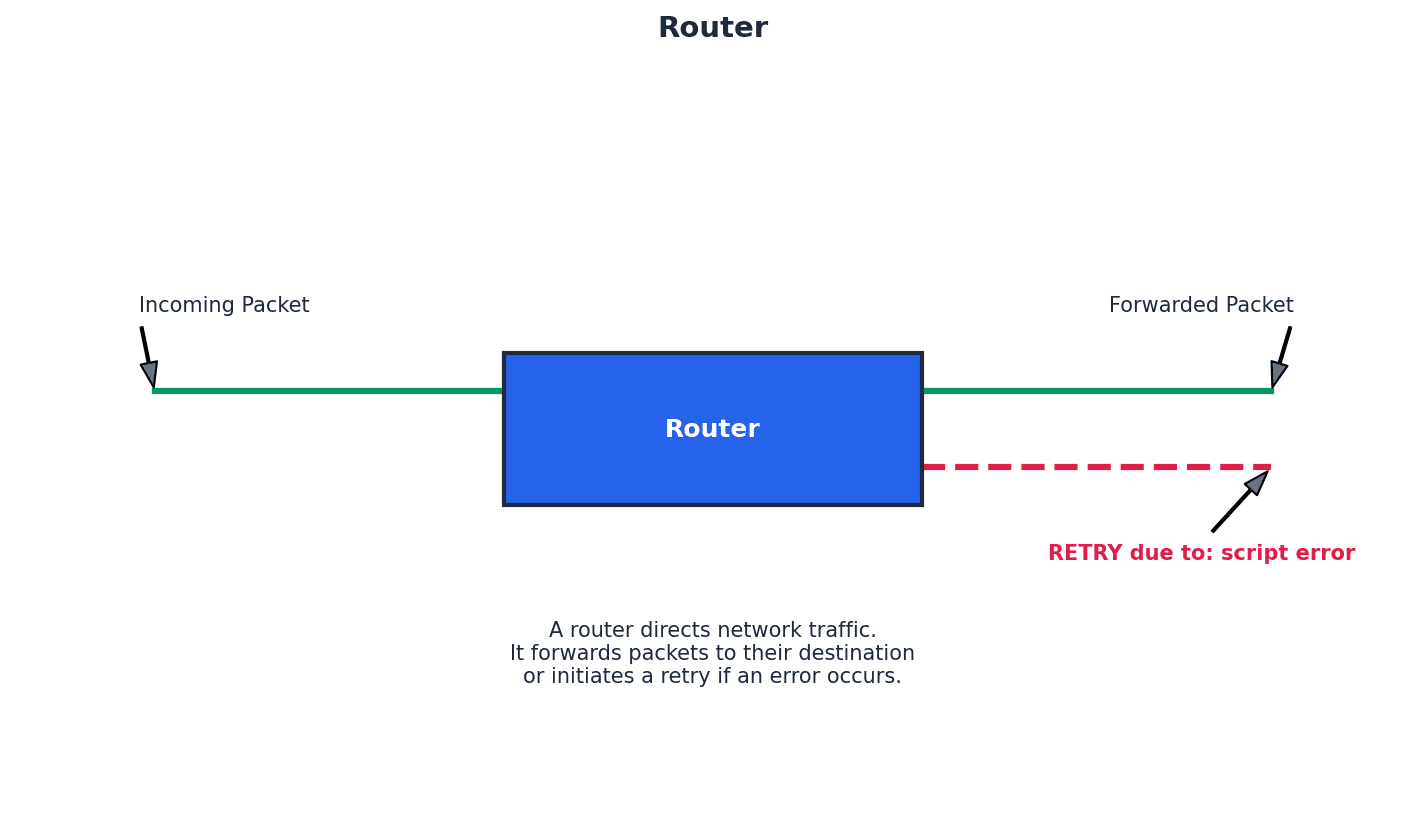

Router — Device which enables data packets to be routed between different networks (for example, can join LANs to form a WAN).

Routers inspect data packets, calculate the best route to a network destination, and can perform protocol translation to allow different networks (like wired and wireless) to communicate. They restrict broadcasts to a LAN and act as a default gateway, much like a traffic controller directing cars to the correct highway.

Students often think a router just connects devices, but actually its primary function is to connect different networks and intelligently route data between them, often performing protocol conversions.

Gateway — Device that connects LANs which use different protocols.

A gateway is a network device that connects two networks that use different communication protocols. It translates data between these incompatible protocols, allowing devices on one network to communicate with devices on another, acting as a bridge between dissimilar systems.

Modem — Modulator demodulator. A device that converts digital data to analogue data (to be sent down a telephone wire); conversely it also converts analogue data to digital data (which a computer can process).

Modems are essential for transmitting digital data from computers over analogue communication channels, such as traditional telephone lines. They modulate digital signals into analogue for transmission and demodulate analogue signals back into digital for reception, acting as a translator between digital and analogue languages.

Students often think a modem is the same as a router, but actually a modem connects to the external network (like the internet service provider's line) by converting signals, while a router creates and manages a local network.

Softmodem — Abbreviation for software modem; a software-based modem that uses minimal hardware.

A softmodem is a software-based modem that relies heavily on the computer's CPU for processing, rather than dedicated hardware. It performs the modulation and demodulation functions through software, reducing hardware costs but potentially increasing CPU usage.

NIC — Network interface card. These cards allow devices to connect to a network/internet (usually associated with a MAC address set at the factory).

A Network Interface Card (NIC) is a hardware component that allows a computer to connect to a network. It provides the physical connection to the network medium and processes network data, typically having a unique MAC address assigned during manufacturing.

WNIC — Wireless network interface cards/controllers. These cards allow devices to connect to a network/internet (usually associated with a MAC address set at the factory).

A Wireless Network Interface Card (WNIC) is a hardware component that enables a computer to connect to a wireless network. It uses radio waves to send and receive data, allowing wireless communication with a WAP or other wireless devices, and also has a unique MAC address.

Clearly distinguish between the functions of different hardware components like routers, switches, and modems, providing their specific roles in network communication.

When data is transmitted across a network, it is broken into packets. Efficient transmission requires protocols to manage how devices access the network medium and handle potential conflicts, such as multiple devices attempting to transmit simultaneously, which can lead to data collisions.

Ethernet — Protocol IEEE 802.3 used by many wired LANs.

Ethernet is a widely used family of computer networking technologies for local area networks (LANs). It defines the physical and data link layers of the network, specifying how data is formatted and transmitted over wired connections, and includes mechanisms for collision detection.

Broadcast — Communication where pieces of data are sent from sender to receiver.

In a broadcast communication, data packets are sent from a single source to all devices on a network segment. This method is used when information needs to reach every node, but it can be inefficient as it generates traffic for devices that are not the intended recipients.

Collision — Situation in which two messages/data from different sources are trying to transmit along the same data channel.

A collision occurs when two or more devices on a shared network medium attempt to transmit data simultaneously, causing the signals to interfere with each other. This corrupts the data and requires retransmission, impacting network performance.

CSMA/CD — Carrier sense multiple access with collision detection – a method used to detect collisions and resolve the issue.

CSMA/CD is a protocol used in Ethernet networks to manage access to the shared transmission medium and handle data collisions. Devices 'listen' to the network before transmitting (carrier sense), and if a collision is detected, they stop transmitting, wait a random amount of time, and then attempt to retransmit.

Students often assume that CSMA/CD prevents collisions entirely, rather than detecting and resolving them after they occur.

Conflict — Situation in which two devices have the same IP address.

An IP address conflict arises when two or more devices on the same network are assigned the identical IP address. This prevents proper network communication for the affected devices, as the network cannot uniquely identify them.

Bit streaming is the continuous transmission of digital data over a network, allowing users to consume multimedia content without fully downloading it first. This technology relies on buffering to ensure smooth playback and can be categorized into real-time and on-demand methods.

Bit streaming — Contiguous sequence of digital bits sent over a network/internet.

Bit streaming refers to the continuous flow of digital data, typically multimedia content, from a server to a client device over a network. This allows users to watch or listen to content as it arrives, without needing to download the entire file first.

Buffering — Store which holds data temporarily.

Buffering is the process of temporarily storing a portion of streaming data in a dedicated memory area (buffer) on the client device before playback. This helps to ensure smooth, uninterrupted playback by providing a reserve of data in case of network fluctuations or temporary slowdowns.

Bit rate — Number of bits per second that can be transmitted over a network.

Bit rate, or data rate, measures the speed at which data is transferred over a communication channel, expressed in bits per second (bps). A higher bit rate generally indicates faster data transmission and, for streaming, can result in higher quality audio or video.

On demand (bit streaming) — System that allows users to stream video or music files from a central server as and when required without having to save the files on their own computer/tablet/phone.

On-demand bit streaming allows users to select and play multimedia content from a server at any time, providing flexibility and control over what they watch or listen to. The content is streamed directly to the device without permanent storage, such as with popular video streaming services.

Real-time (bit streaming) — System in which an event is captured by camera (and microphone) connected to a computer and sent to a server where the data is encoded.

Real-time bit streaming involves the live transmission of events as they happen, such as live sports broadcasts or video conferencing. The data is captured, encoded, and streamed with minimal delay, allowing viewers to experience the event almost instantaneously.

The Internet and the World Wide Web are often confused, but they are distinct concepts. The Internet is the underlying global network infrastructure, while the World Wide Web is a system of interconnected documents and other web resources that are accessed via the Internet.

ARPAnet — Advanced Research Projects Agency Network, an early form of packet switching wide area network.

ARPAnet was one of the earliest forms of networking, developed around 1970 in the USA, connecting large computers in the Department of Defense and later universities. It is widely considered the technical foundation for the modern internet, like the very first, small-scale highway system that proved the concept of interconnected roads.

Students often think ARPAnet is the internet, but actually it was a precursor and the technical platform upon which the internet later developed.

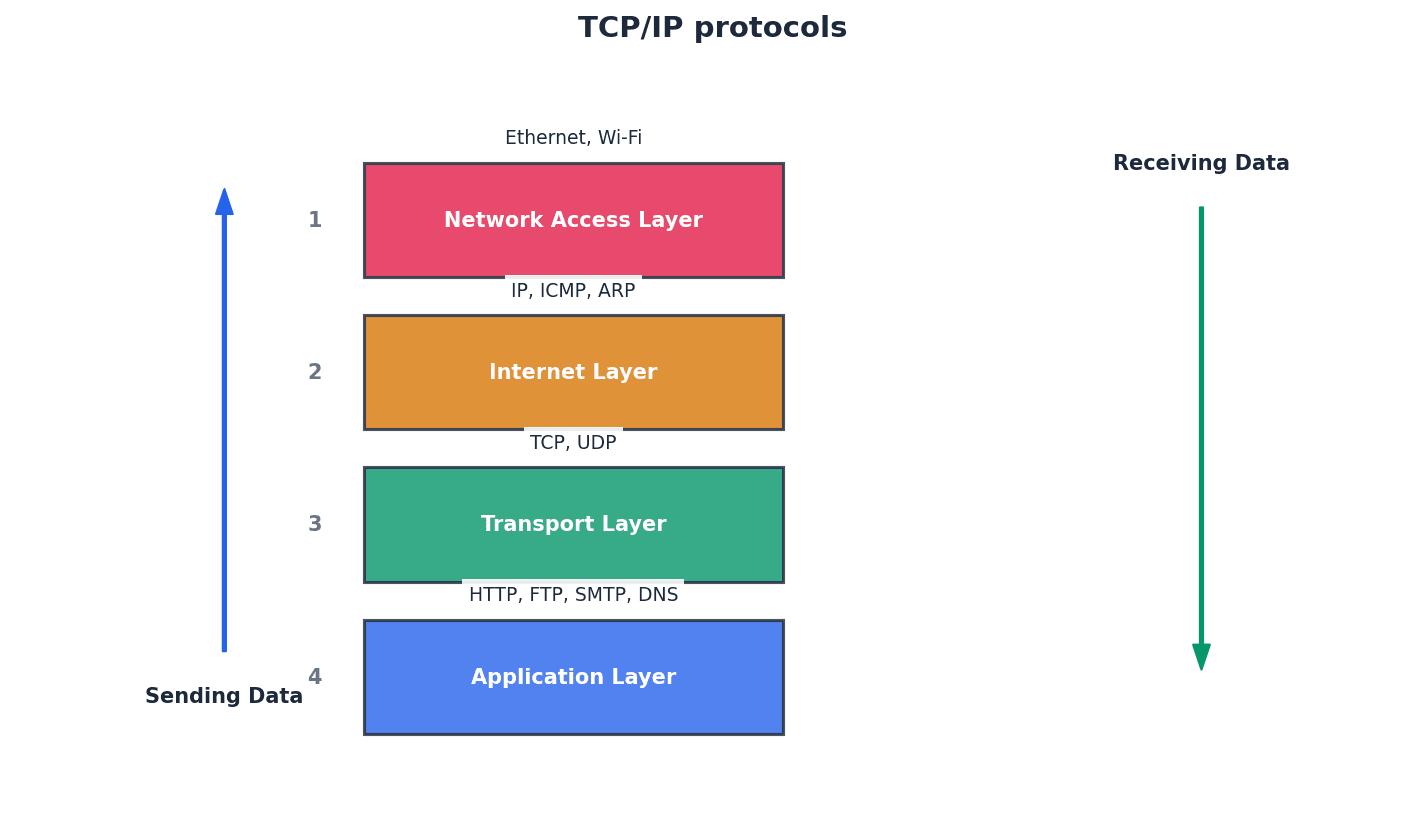

Internet — Massive network of networks, made up of computers and other electronic devices; uses TCP/IP communication protocols.

The Internet is a global system of interconnected computer networks that uses the standard Internet Protocol Suite (TCP/IP) to link billions of devices worldwide. It is the physical infrastructure that allows data to be exchanged globally.

World Wide Web (WWW) — Collection of multimedia web pages stored on a website, which uses the internet to access information from servers and other computers.

The World Wide Web (WWW) is a system of interlinked hypertext documents and other web resources that are accessed via the Internet. It is a service that runs on the Internet, allowing users to navigate through web pages using web browsers.

Students often confuse the internet with the World Wide Web, thinking they are the same thing. Remember that the WWW is a service that runs on the Internet.

Internet service provider (ISP) — Company which allows a user to connect to the internet.

An Internet Service Provider (ISP) is a company that provides individuals and organizations with access to the Internet. ISPs offer various services, including internet access, email, and web hosting, acting as the gateway for users to connect to the global network.

Public switched telephone network (PSTN) — Network used by traditional telephones when making calls or when sending faxes.

The Public Switched Telephone Network (PSTN) is the traditional circuit-switched telephone network that has been used for voice communication for over a century. It is a global network of telephone lines, fiber optic cables, and switching centers that allows users to make phone calls and send faxes.

Voice over Internet Protocol (VoIP) — Converts voice and webcam images into digital packages to be sent over the internet.

Voice over Internet Protocol (VoIP) is a technology that allows voice and multimedia communications to be transmitted over the Internet. It converts analogue audio signals into digital packets, which are then sent over the internet, enabling phone calls and video conferencing without traditional telephone lines.

To navigate the vastness of the Internet, devices require unique identifiers known as IP addresses. These numerical labels allow data packets to be routed to their correct destinations. The Domain Name Service (DNS) then translates human-readable website addresses into these numerical IP addresses.

Internet protocol (IP) — Uses IPv4 or IPv6 to give addresses to devices connected to the internet.

The Internet Protocol (IP) is a set of rules for sending data over the internet. It defines how data packets are addressed and routed from the source to the destination, using either IPv4 or IPv6 addressing schemes to uniquely identify devices.

IPv4 — IP address format which uses 32 bits, such as 200.21.100.6.

IPv4 (Internet Protocol version 4) is the fourth version of the Internet Protocol and uses 32-bit addresses, typically represented as four decimal numbers separated by dots (e.g., 200.21.100.6). It is the most widely used IP addressing system, though its address space is becoming exhausted.

Classless inter-domain routing (CIDR) — Increases IPv4 flexibility by adding a suffix to the IP address, such as 200.21.100.6/18.

Classless Inter-Domain Routing (CIDR) is a method for allocating IP addresses and routing Internet Protocol packets. It improves IPv4 address allocation efficiency by allowing more flexible division of IP address ranges, using a suffix to indicate the network portion of the address.

IPv6 — Newer IP address format which uses 128 bits, such as A8F0:7FFF:F0F1:F000:3DD0:256A:22FF:AA00.

IPv6 (Internet Protocol version 6) is the latest version of the Internet Protocol, designed to address the exhaustion of IPv4 addresses. It uses 128-bit addresses, providing a vastly larger address space and offering improved features for routing and network auto-configuration.

Zero compression — Way of reducing the length of an IPv6 address by replacing groups of zeroes by a double colon (::); this can only be applied once to an address to avoid ambiguity.

Zero compression is a technique used to shorten the written representation of IPv6 addresses by replacing a single contiguous sequence of 16-bit blocks consisting of all zeros with a double colon (::). This can only be applied once per address to maintain uniqueness.

Sub-netting — Practice of dividing networks into two or more sub-networks.

Sub-netting is the process of dividing a larger network into smaller, more manageable sub-networks (subnets). This practice improves network efficiency, security, and organization by reducing broadcast traffic and allowing for more granular control over network segments.

Private IP address — An IP address reserved for internal network use behind a router.

Private IP addresses are specific ranges of IP addresses reserved for use within private networks, such as home or office LANs. They are not routable on the public internet and are used to identify devices internally, providing a layer of security and conserving public IP addresses.

Public IP address — An IP address allocated by the user’s ISP to identify the location of their device on the internet.

A public IP address is a globally unique IP address assigned to a device by an Internet Service Provider (ISP). It is used to identify the device's location on the public internet, allowing it to communicate with other devices and servers worldwide.

Students often assume that all IP addresses are public and globally unique, not understanding the concept of private IP addresses within local networks.

Uniform resource locator (URL) — Specifies location of a web page (for example, www.hoddereducation.co.uk).

A Uniform Resource Locator (URL) is a specific type of Uniform Resource Identifier (URI) that provides a means of locating a resource on the World Wide Web and also describes how to access it. It is the address used to find web pages and other resources on the internet.

Domain name service (DNS) — (Also known as domain name system) gives domain names for internet hosts and is a system for finding IP addresses of a domain name.

The Domain Name Service (DNS) is a hierarchical and decentralized naming system for computers, services, or any resource connected to the Internet or a private network. It translates human-readable domain names (like www.example.com) into numerical IP addresses, which computers use to identify each other.

Web browser — Software that connects to DNS to locate IP addresses; interprets web pages sent to a user’s computer so that documents and multimedia can be read or watched/listened to.

A web browser is application software for accessing the World Wide Web. When a user enters a URL, the browser uses DNS to find the corresponding IP address, then retrieves and renders the web page content, allowing users to view documents and multimedia.

HyperText Mark-up Language (HTML) — Used to design web pages and to write http(s) protocols, for example.

HyperText Markup Language (HTML) is the standard markup language for documents designed to be displayed in a web browser. It defines the structure and content of web pages, using tags to format text, embed images, and create hyperlinks.

JavaScript® — Object-orientated (or scripting) programming language used mainly on the web to enhance HTML pages.

JavaScript is a high-level, often just-in-time compiled, and multi-paradigm programming language. It is primarily used to create interactive and dynamic content on web pages, enhancing the user experience beyond static HTML.

PHP — Hypertext processor; an HTML-embedded scripting language used to write web pages.

PHP (Hypertext Preprocessor) is a popular general-purpose scripting language especially suited to web development. It is often embedded directly into HTML and executed on the server side to generate dynamic web content before it is sent to the client's web browser.

When asked to 'explain' benefits/drawbacks of networking, provide specific examples beyond just stating the point.

When describing LANs, mention shared resources (printers, files) and their limited geographical area as key characteristics.

When defining MANs, emphasize their intermediate geographical size, connecting multiple LANs within a city or campus.

When asked about the origins of the internet, mention ARPAnet as a key early development, focusing on its role in packet switching and connecting institutions.

Clearly state the dual function of modulation and demodulation when defining a modem, and its role in bridging digital and analogue communication mediums.

For this chapter, focus on understanding the 'why' behind different network choices (e.g., why use a star topology over a bus, or client-server over peer-to-peer) and be able to articulate the trade-offs in terms of performance, security, and cost.

Definitions Bank

ARPAnet

Advanced Research Projects Agency Network, an early form of packet switching wide area network.

WAN

Wide area network, a network covering a very large geographical area.

LAN

Local area network, a network covering a small area such as a single building.

MAN

Metropolitan area network, a network which is larger than a LAN but smaller than a WAN, which can cover several buildings in a single city, such as a university campus.

File server

A server on a network where central files and other data are stored.

+63 more definitions

View all →Common Mistakes

Students often confuse the internet with the World Wide Web.

The Internet is the global network infrastructure, while the World Wide Web is a collection of interconnected documents and resources accessed via the Internet.

Students often think hubs and switches perform the same function.

Hubs broadcast data to all devices on a LAN, whereas switches direct data to specific destination addresses, improving efficiency and security.

Students often believe that data redundancy is wasteful.

Data redundancy is a crucial strategy for ensuring data availability and disaster recovery by storing multiple copies of data on different servers.

+3 more

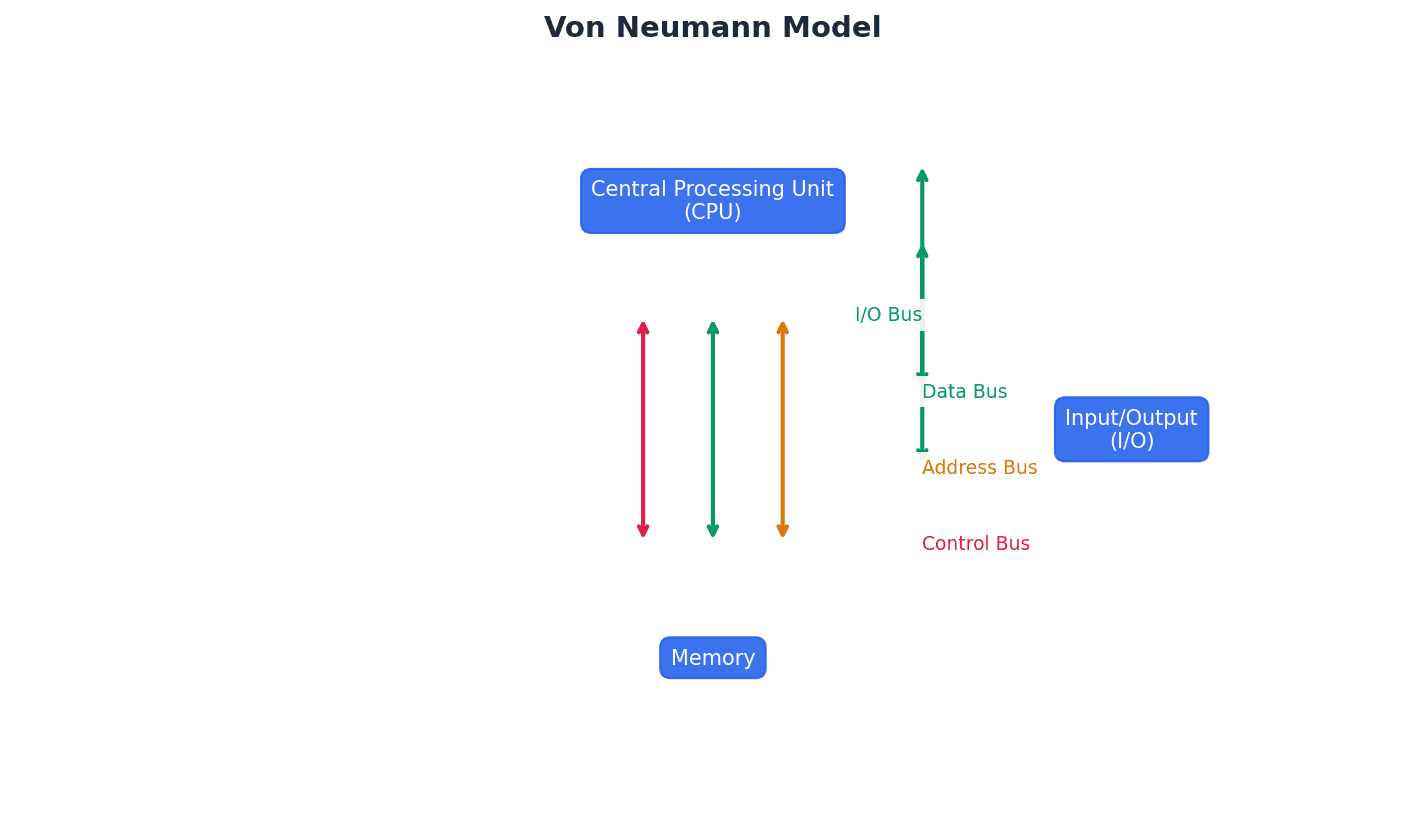

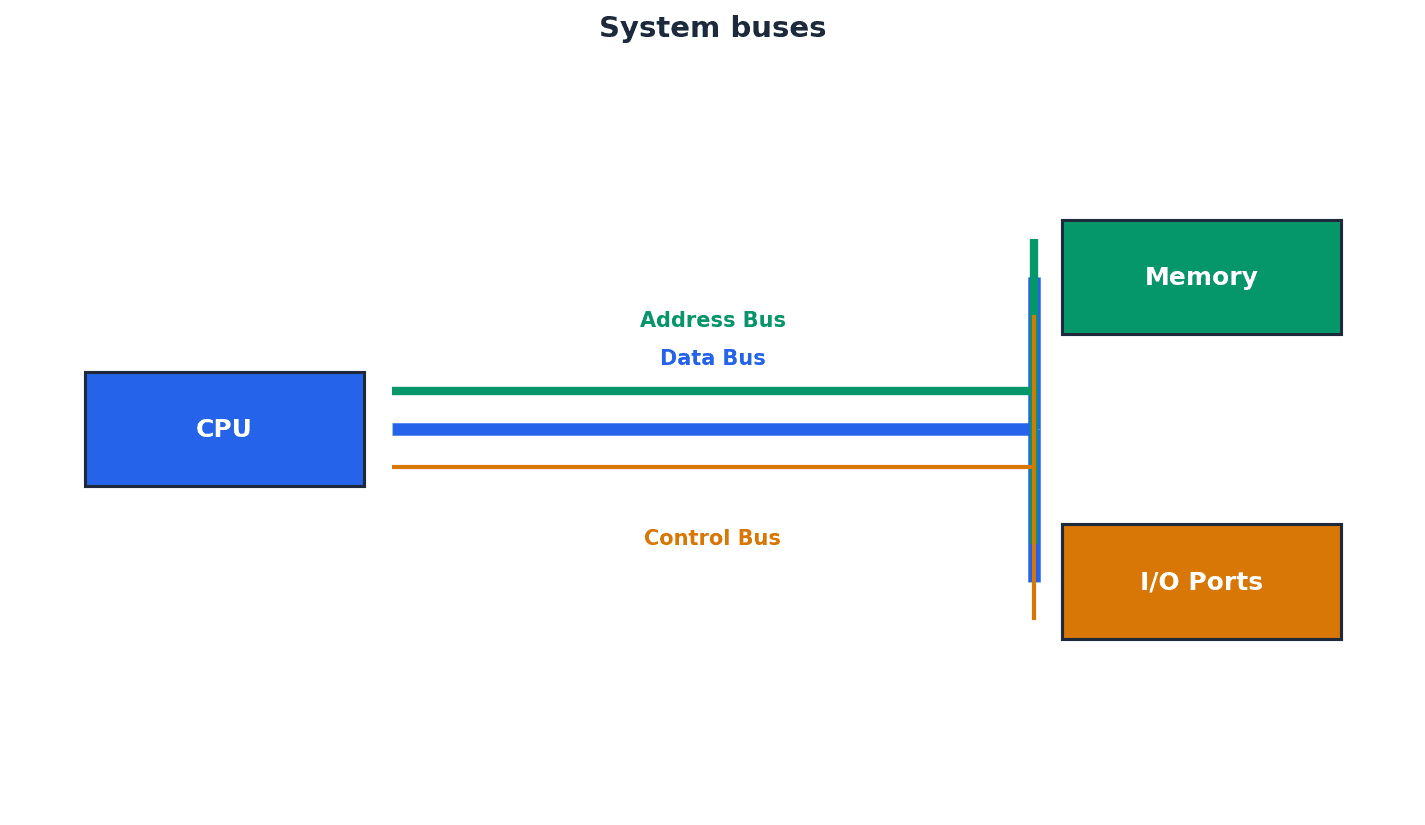

View all →This chapter provides a comprehensive overview of computer hardware, covering primary and secondary storage, various input/output devices, and the fundamental principles of logic gates and circuits. It explains the characteristics and applications of different memory types and storage technologies, alongside the operation of monitoring and control systems.

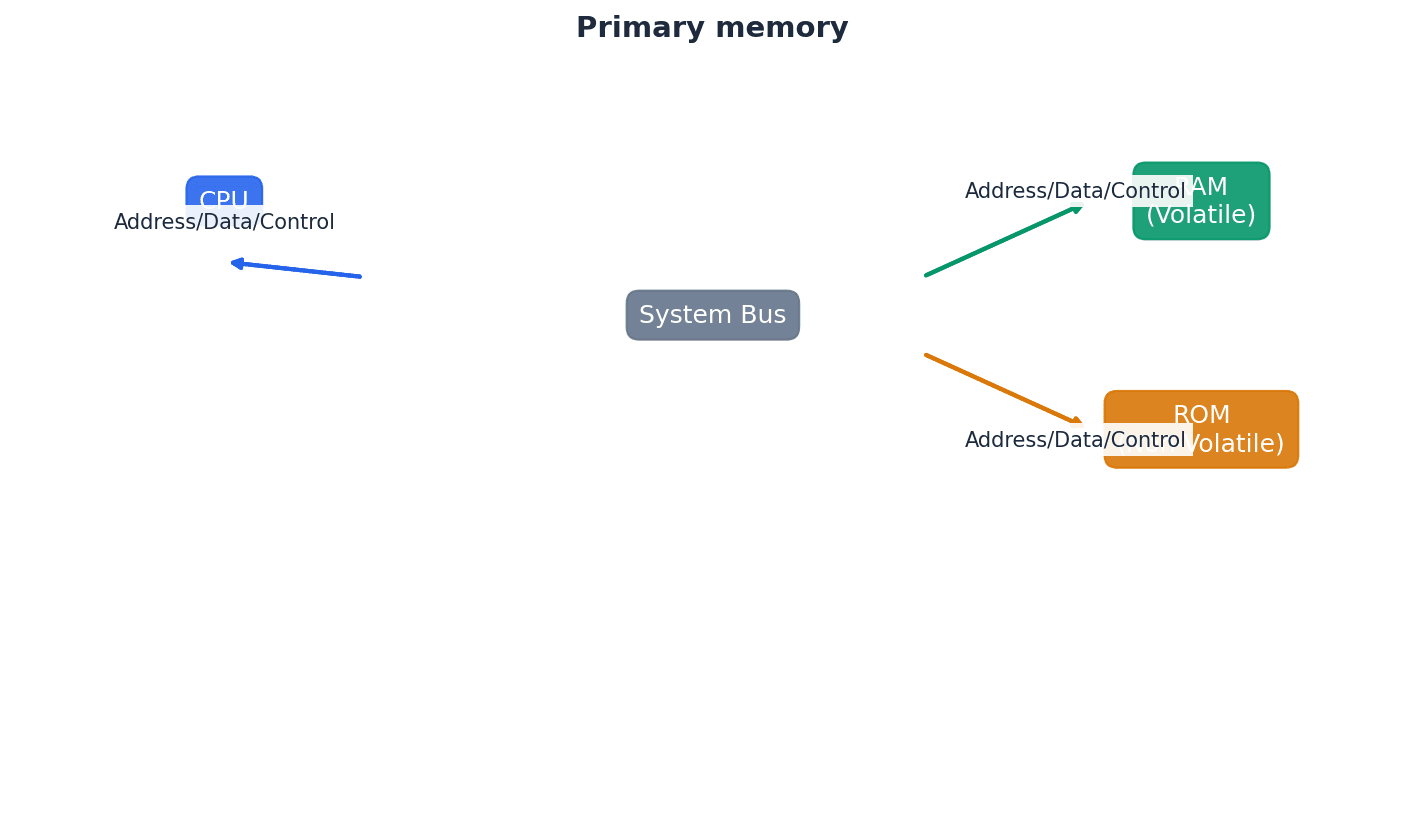

Random access memory (RAM) — Primary memory unit that can be written to and read from.

RAM is volatile memory used by the computer to temporarily store data, files, and parts of the operating system or applications currently in use. Its contents are lost when the computer is powered off, much like a desk workspace that is cleared at the end of the day.

Students often confuse RAM as permanent storage; it is volatile and temporary. Remember that RAM is temporary storage for active programs and data; permanent storage is handled by secondary devices like HDDs or SSDs.

Read-only memory (ROM) — Primary memory unit that can only be read from.

ROM is non-volatile memory, meaning its contents are retained even when the power is off. It typically stores essential data and instructions, such as the BIOS, that the computer needs to access during startup and cannot be altered by the user, similar to an unchangeable instruction manual for basic computer functions.

Students often believe ROM is completely unchangeable; some types (EPROM, EEPROM) can be reprogrammed under specific conditions. Remember that while ROM is generally read-only, specific types can be reprogrammed, though not during normal operation.

Memory cache — High speed memory external to the processor which stores data which the processor will need again.

Memory cache acts as a buffer between the CPU and main memory (RAM), storing frequently accessed data and instructions. This allows the processor to retrieve them much faster than from main memory, improving overall system performance, much like a chef's easily accessible spice rack next to their main pantry.

When asked to 'explain the purpose of cache memory', focus on its role in speeding up data access for the CPU by storing frequently used data/instructions, reducing reliance on slower main memory.

Primary memory, directly accessible by the CPU, includes both RAM and ROM, each serving distinct purposes. RAM is volatile, meaning its contents are lost when power is removed, and is used for active data and programs. In contrast, ROM is non-volatile, retaining its data without power, and stores critical boot-up instructions like the BIOS.

Dynamic RAM (DRAM) — Type of RAM chip that needs to be constantly refreshed.

DRAM stores bits in capacitors and transistors, requiring constant refreshing (recharging) every few microseconds to prevent the charge from leaking away and losing data. It is less expensive and has higher memory capacity than SRAM, making it common for main memory, similar to a leaky bucket needing continuous refilling.

Refreshed — Requirement to charge a component to retain its electronic state.

In the context of DRAM, refreshing means periodically recharging the capacitors that store data bits. Without this constant recharging, the electrical charge would dissipate, leading to data loss, much like a battery that slowly loses its charge and needs regular plugging in.

Students often mix up the refresh requirement of DRAM with data modification; refreshing maintains the electrical state, not changes data. Remember that refreshing in DRAM means recharging the capacitors to maintain their electronic state, not necessarily changing the stored data.

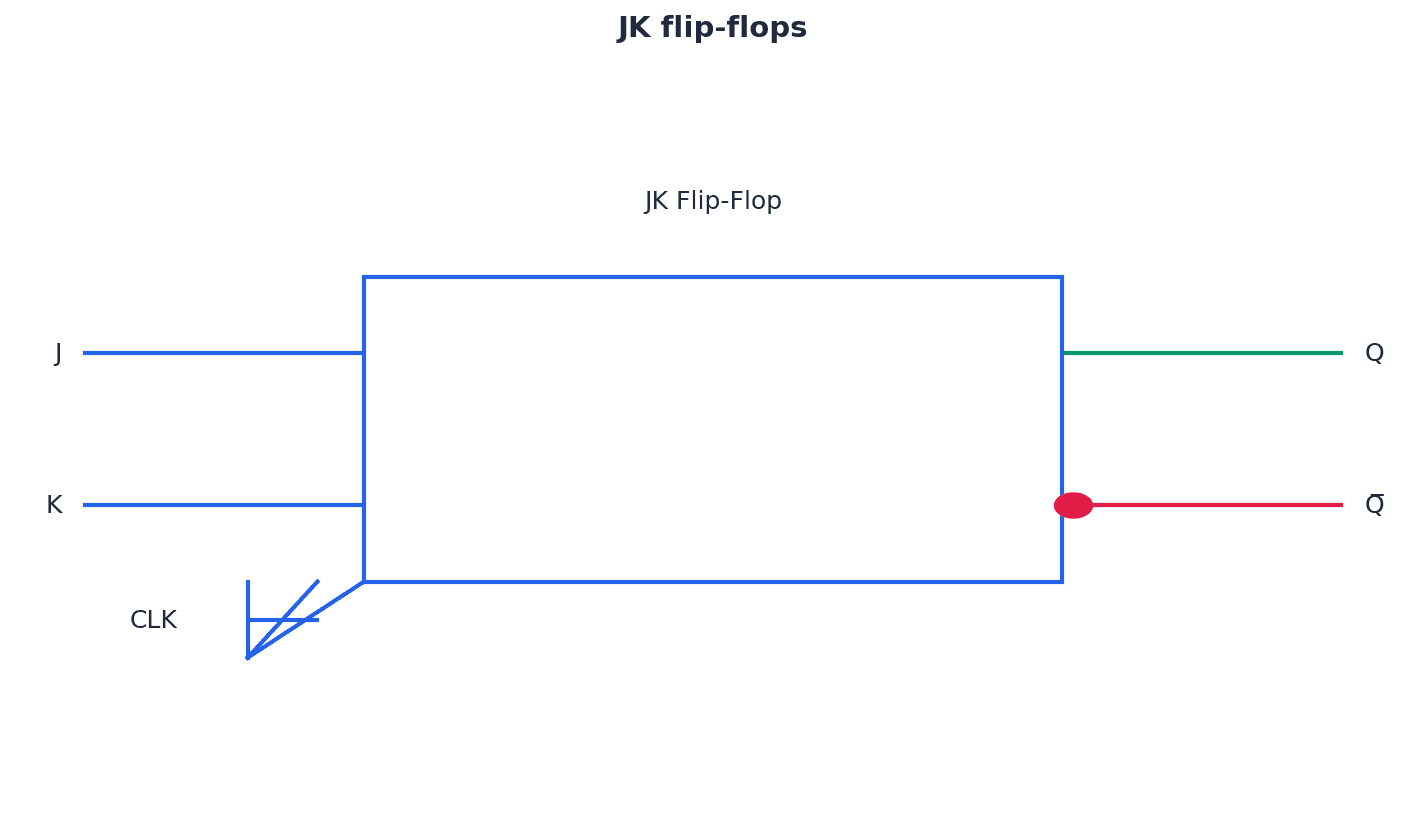

Static RAM (SRAM) — Type of RAM chip that uses flip-flops and does not need refreshing.

SRAM stores bits using flip-flops, which are more complex circuits than DRAM's capacitors and transistors, but do not require constant refreshing. This makes SRAM much faster than DRAM and suitable for applications where speed is critical, such as processor memory cache, like a light switch that stays in its position without continuous power.

When asked about DRAM, mention the use of capacitors and transistors, and the critical need for constant refreshing. Contrast it with SRAM's lack of refresh requirement.

Programmable ROM (PROM) — Type of ROM chip that can be programmed once.

PROM is initially blank and can be programmed by 'burning' fuses in a matrix using a PROM writer and an electric current. Once programmed, its contents are permanent and cannot be erased or rewritten, making it suitable for mobile phones and RFID tags, much like a blank CD-R that can be recorded onto only once.

Erasable PROM (EPROM) — Type of ROM that can be programmed more than once using ultraviolet (UV) light.

EPROM uses floating gate transistors and capacitors, allowing it to be programmed, erased by exposure to strong UV light through a quartz window, and then reprogrammed. This makes it useful for applications under development, such as new games consoles, where firmware might need updates, similar to a whiteboard that can be written on, erased with a special cleaner, and then written on again.

Electronically erasable programmable read-only memory (EEPROM) — Read-only (ROM) chip that can be modified by the user, which can then be erased and written to repeatedly using pulsed voltages.

EEPROM is a type of non-volatile memory that can be electrically erased and reprogrammed byte-by-byte, unlike EPROM which requires UV light and erases the entire chip. This makes it more flexible for in-system updates, though slower than RAM, much like a digital notepad where individual notes can be erased and rewritten electronically.

Flash memory — A type of EEPROM, particularly suited to use in drives such as SSDs, memory cards and memory sticks.

Flash memory is a non-volatile storage technology that uses NAND or NOR gate-based EEPROM cells. It allows data to be written and erased in blocks (NAND) or bytes (NOR), offering high density and speed suitable for SSDs, USB drives, and memory cards, similar to a digital photo album where pages or individual photos can be quickly added or deleted.

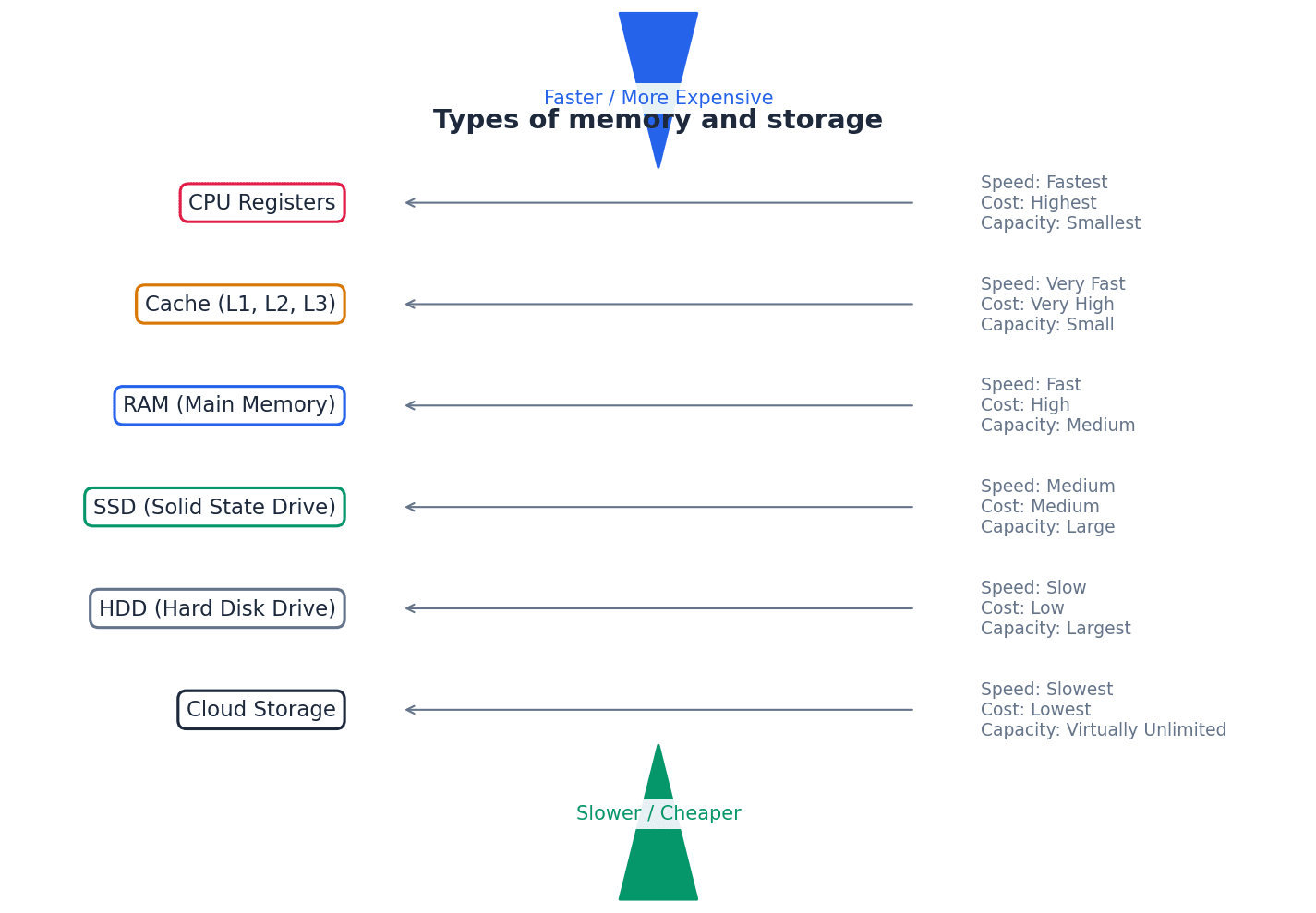

Secondary storage devices provide non-volatile, long-term data storage, complementing the temporary nature of primary memory. These include magnetic storage like Hard Disk Drives (HDDs), solid-state storage like Solid State Drives (SSDs) and flash memory, and optical media such as CDs, DVDs, and Blu-ray discs. Each technology offers different trade-offs in terms of speed, capacity, durability, and cost.

Hard disk drive (HDD) — Type of magnetic storage device that uses spinning disks.

HDDs store data digitally on magnetic surfaces of rapidly spinning platters, accessed by read-write heads. Data is organized into tracks and sectors, but latency and fragmentation can slow down data access over time, much like a record player where the needle moves across spinning records to find music.

Latency — The lag in a system; for example, the time to find a track on a hard disk, which depends on the time taken for the disk to rotate around to its read-write head.

In HDDs, latency is the delay experienced while the desired sector of data rotates into position under the read-write head after the head has moved to the correct track. High latency contributes to slower data access times, similar to the time it takes for a specific book on a rotating bookshelf to spin around to you.

Students often confuse latency with data transfer rate; latency is delay before transfer, transfer rate is speed during transfer. Remember that latency is the delay before data transfer begins, while data transfer rate is how quickly data moves once the transfer starts.

Fragmented — Storage of data in non-consecutive sectors; for example, due to editing and deletion of old data.

Fragmentation occurs on HDDs when files are stored in scattered, non-adjacent sectors across the disk. This happens over time as files are created, deleted, and modified, forcing the read-write heads to move more, increasing access time, much like reading a book where chapters are scattered across different pages.

Removable hard disk drive — Portable hard disk drive that is external to the computer; it can be connected via a USB port when required; often used as a device to back up files and data.

These are self-contained HDDs designed for portability and external connection, typically via USB. They offer large storage capacity for backups or transferring large files between computers, providing flexibility not found in internal drives, like a portable safe deposit box for digital files.

Solid state drive (SSD) — Storage media with no moving parts that relies on movement of electrons.

SSDs use flash memory (NAND or NOR chips) to store data, controlling the movement of electrons to represent 0s and 1s. Lacking moving parts, they offer faster data access, lower power consumption, greater durability, and are lighter and thinner than HDDs, similar to a digital book where all pages are instantly accessible.

Optical storage — CDs, DVDs and Blu-rayTM discs that use laser light to read and write data.

Optical storage media store data as microscopic pits and bumps on a spiral track, which are read and written using laser light. Different types (CD, DVD, Blu-ray) use lasers of varying wavelengths to achieve different storage capacities, much like a musical score etched onto a disc that a laser 'reads'.

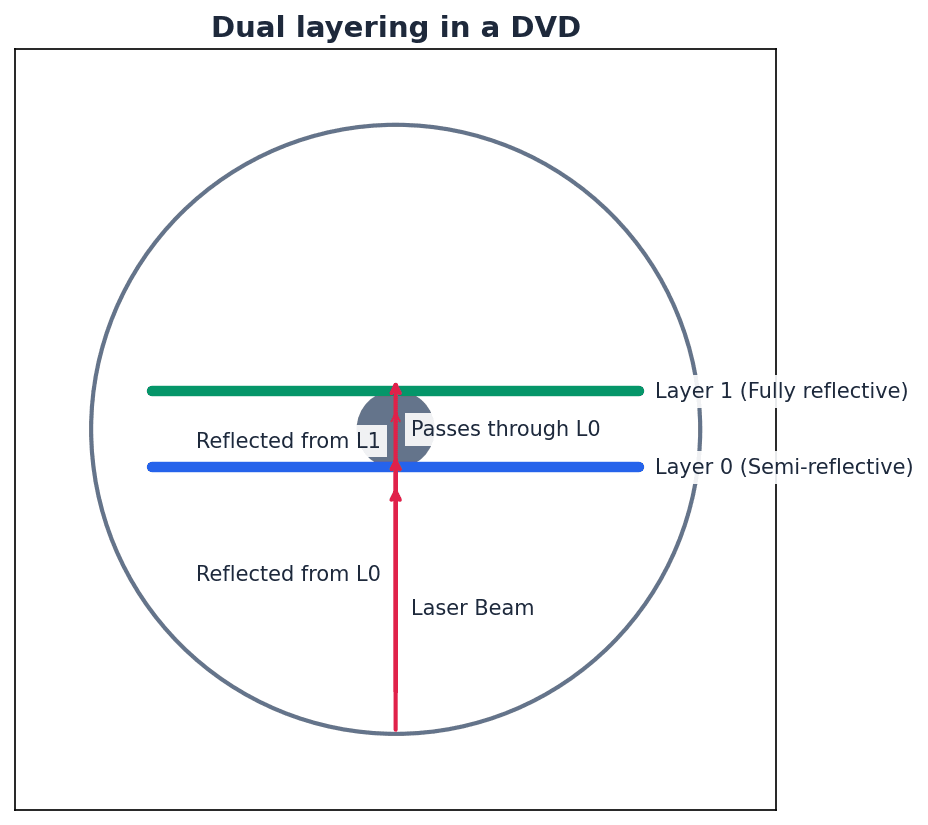

Dual layering — Used in DVDs; uses two recording layers.

Dual layering in DVDs involves two separate recording layers joined together, with a thin reflector between them. A laser can focus at different depths to read or write data on either layer, significantly increasing the disc's storage capacity compared to single-layer discs, like having two sheets of paper glued together to write on both sides.

Birefringence — A reading problem with DVDs caused by refraction of laser light into two beams.

Birefringence is an issue in dual-layered DVDs where the laser light, passing through the polycarbonate layers, refracts into two separate beams. This can lead to reading errors as the laser struggles to accurately interpret the data, similar to trying to read a book through a wavy, imperfect window.

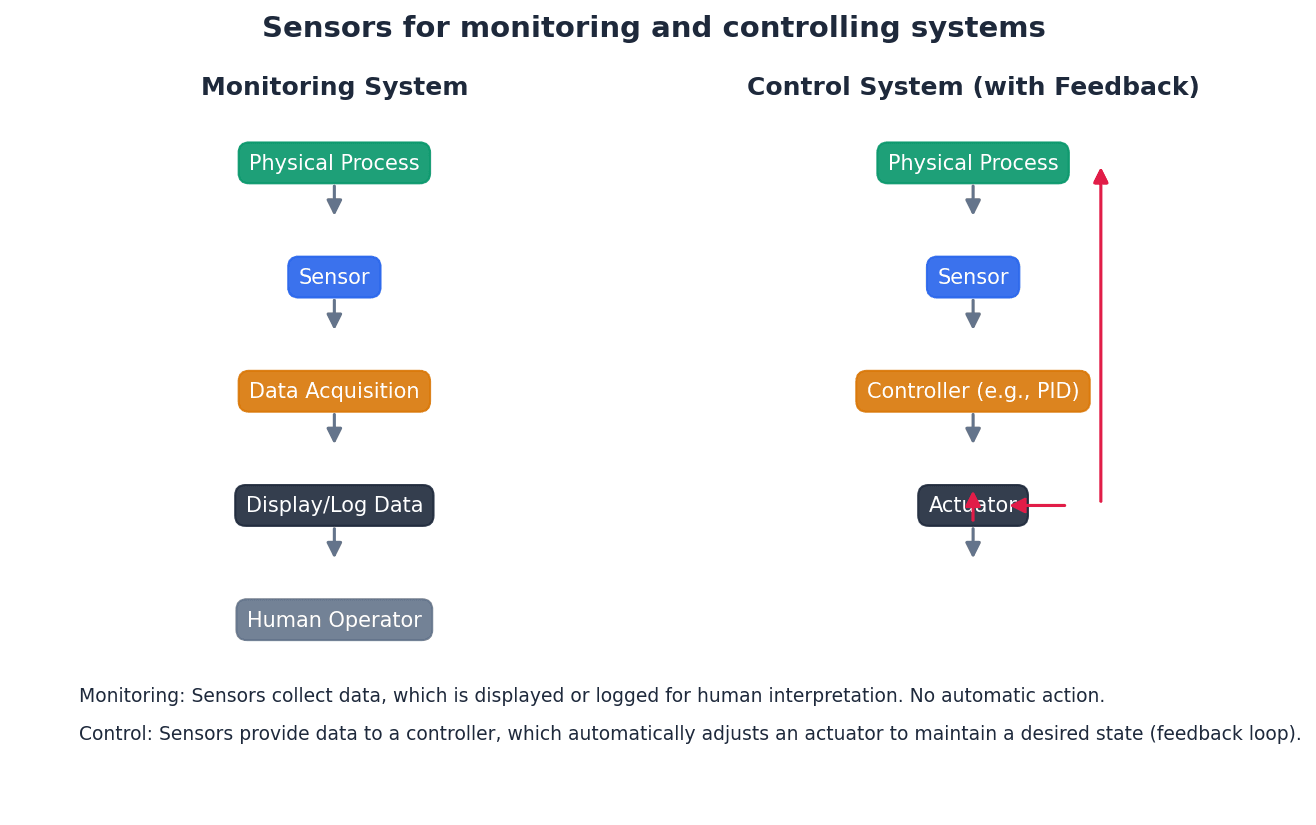

Computers interact with the world through various input and output devices. Input devices, such as sensors and microphones, capture data from the environment or user. Output devices, like printers and screens, present information or perform actions based on computer processing. Many of these devices require conversion between analogue and digital signals.

Binder 3D printing — 3D printing method that uses a two-stage pass; the first stage uses dry powder and the second stage uses a binding agent.

In binder 3D printing, each layer is formed in two steps: first, a layer of dry powder is spread, and then a print head sprays a liquid binding agent onto specific areas, solidifying the powder to create the desired cross-section of the object, much like building a sandcastle by laying dry sand and then spraying water to make it stick.

Students often assume all 3D printing involves melting material; binder 3D printing uses powder and a binding agent. Remember that binder 3D printing uses a liquid binder to solidify powder, which is a different mechanism from extrusion or laser sintering.

Direct 3D printing — 3D printing technique where print head moves in the x, y and z directions. Layers of melted material are built up using nozzles like an inkjet printer.

Direct 3D printing builds objects layer by layer by extruding melted material (like plastic or resin) through a nozzle, similar to how an inkjet printer sprays ink. The print head moves in three dimensions to create the object's shape, much like building a sculpture by squeezing out thin lines of soft clay from a tube.

Digital to analogue converter (DAC) — Needed to convert digital data into electric currents that can drive motors, actuators and relays, for example.

A DAC transforms discrete digital signals (binary values) into continuous analogue signals (varying electric currents or voltages). This is essential for output devices like speakers, motors, and actuators that operate on analogue inputs, acting as a translator from numbers to a smooth melody.

Students often think digital signals can directly control all physical devices; many require analogue signals, necessitating a DAC. Remember that many real-world devices (like motors or speakers) require continuous analogue signals, necessitating a DAC.

Analogue to digital converter (ADC) — Needed to convert analogue data (read from sensors, for example) into a form understood by a computer.

An ADC transforms continuous analogue signals (like temperature, pressure, or sound from sensors) into discrete digital values (binary data) that a computer can process, store, and manipulate. It's like a device that takes a continuous measurement, such as water height, and converts it into a specific number a computer can understand.

Organic LED (OLED) — Uses movement of electrons between cathode and anode to produce an on-screen image. It generates its own light so no back lighting required.

OLED technology uses organic materials that emit light when an electric current passes through them, moving electrons between a cathode and an anode. Because each pixel generates its own light, OLED screens do not require backlighting, allowing for thinner displays, true blacks, and high contrast, much like tiny, individual light bulbs that can turn on and off independently.

Screen resolution — Number of pixels in the horizontal and vertical directions on a television/computer screen.

Screen resolution defines the clarity and detail of an image by specifying the total number of pixels displayed horizontally and vertically (e.g., 1920 × 1080). Higher resolution means more pixels, resulting in sharper images and more on-screen content, similar to a mosaic made of tiny tiles where more tiles mean a more detailed picture.

Touch screen — Screen on which the touch of a finger or stylus allows selection or manipulation of a screen image; they usually use capacitive or resistive technology.

Touch screens integrate input and output functions, allowing users to interact directly with the display using touch. They typically employ either capacitive or resistive technology to detect the location of a touch, much like a digital whiteboard where you can draw or select things directly with your finger.

Capacitive — Type of touch screen technology based on glass layers forming a capacitor, where fingers touching the screen cause a change in the electric field.

Capacitive touch screens consist of multiple glass layers that create an electric field. When a conductive object, like a bare finger, touches the screen, it draws a small amount of current, causing a change in the electric field that a microprocessor detects to determine the touch coordinates, similar to a grid of invisible electric wires where a finger draws a tiny spark.

Resistive — Type of touch screen technology. When a finger touches the screen, the glass layer touches the plastic layer, completing the circuit and causing a current to flow at that point.

Resistive touch screens have two flexible layers, typically polyester and glass, separated by a small gap. When pressure is applied, the layers make contact, completing an electrical circuit at that point, and the change in resistance is measured to determine the touch location, like two sheets of conductive paper touching to complete a circuit.

Virtual reality headset — Apparatus worn on the head that covers the eyes like a pair of goggles. It gives the user the ‘feeling of being there’ by immersing them totally in the virtual reality experience.

VR headsets provide an immersive virtual experience by displaying separate video feeds to each eye, often with lenses to create a 3D effect. Sensors track head movements, allowing the virtual environment to react dynamically, enhancing the sense of presence, much like high-tech goggles that transport you into a different world.

Sensor — Input device that reads physical data from its surroundings.

Sensors are devices that detect and measure physical quantities from the environment, such as temperature, pressure, light, or sound. They convert these analogue physical properties into electrical signals, which are then typically converted to digital data by an ADC for computer processing, acting as the 'eyes' or 'ears' of a computer system.

Monitoring and control systems rely heavily on sensors to gather data from the physical world. This analogue data is then converted into a digital format by an Analogue-to-Digital Converter (ADC) for processing by a computer. The computer can then make decisions and, if necessary, send digital signals to output devices, which are converted back to analogue signals by a Digital-to-Analogue Converter (DAC) to control actuators or motors.

Logic gates — Electronic circuits which rely on ‘on/off’ logic. The most common ones are NOT, AND, OR, NAND, NOR and XOR.

Logic gates are fundamental building blocks of digital circuits, taking one or more binary inputs (0 or 1) and producing a single binary output based on a specific Boolean function. They are used to implement decision-making processes in computer hardware, like tiny switches following simple rules.

Logic circuit — Formed from a combination of logic gates and designed to carry out a particular task. The output from a logic circuit will be 0 or 1.

Truth table — A method of checking the output from a logic circuit. They use all the possible binary input combinations depending on the number of inputs; for example, two inputs have 22 (4) possible binary combinations, three inputs will have 23 (8) possible binary combinations, and so on.

Boolean algebra — A form of algebra linked to logic circuits and based on TRUE and FALSE.

Number of binary combinations for truth table

Used to determine the number of rows in a truth table, where 'n' is the number of inputs.

NOT A (Boolean Algebra)

This Boolean expression represents the NOT operation, where the output is the inverse of the single input. Also written as X = NOT A.

A AND B (Boolean Algebra)

This Boolean expression represents the AND operation, where the output is 1 only if both inputs are 1. Also written as X = A AND B or A ∧ B.

A OR B (Boolean Algebra)

This Boolean expression represents the OR operation, where the output is 1 if at least one input is 1. Also written as X = A OR B or A ∨ B.

A NAND B (Boolean Algebra)

This Boolean expression represents the NAND operation, which is the inverse of the AND operation. Also written as X = A NAND B.

A NOR B (Boolean Algebra)

This Boolean expression represents the NOR operation, which is the inverse of the OR operation. Also written as X = A NOR B.

A XOR B (Boolean Algebra - expanded form 1)

This Boolean expression represents the XOR operation, where the output is 1 if inputs are different. Also written as X = A XOR B.

A XOR B (Boolean Algebra - expanded form 2)

This is an alternative Boolean expression for the XOR operation, where the output is 1 if inputs are different. Also written as X = A XOR B.

Logic circuits are built by combining various logic gates (NOT, AND, OR, NAND, NOR, XOR) to perform specific tasks. To understand and verify the behavior of a logic circuit, a truth table is constructed. This table systematically lists all possible binary input combinations and their corresponding output, ensuring all 2^n combinations are covered for 'n' inputs.

Students often forget to include all 2^n input combinations when constructing truth tables for 'n' inputs. Always ensure your truth table covers every single possible binary input combination.

Be able to draw the symbols, write the Boolean expressions, and construct truth tables for all six common logic gates. Pay attention to the specific conditions for a '1' output for each gate.

Practice constructing logic circuits and their truth tables from given logic expressions or problem descriptions, ensuring correct gate symbols and connections.

Clearly label inputs and outputs on logic circuits and truth tables to avoid ambiguity and ensure full marks.

Understand the role of ADCs and DACs in monitoring and control systems, explaining when and why each is necessary.

When asked to 'describe' hardware devices, explain their function, how they work, and their typical applications. For memory types, be prepared to differentiate between them based on volatility, speed, cost, and refresh requirements. When evaluating embedded systems, discuss both their benefits (e.g., efficiency, reliability) and drawbacks (e.g., limited functionality, difficulty updating).

Definitions Bank

Memory cache

High speed memory external to the processor which stores data which the processor will need again.

Random access memory (RAM)

Primary memory unit that can be written to and read from.

Read-only memory (ROM)

Primary memory unit that can only be read from.

Dynamic RAM (DRAM)

Type of RAM chip that needs to be constantly refreshed.

Static RAM (SRAM)

Type of RAM chip that uses flip-flops and does not need refreshing.

+28 more definitions

View all →Common Mistakes

Confusing RAM as permanent storage.

RAM is volatile and temporary, used for active data and programs. Permanent storage is handled by secondary devices.

Believing ROM is completely unchangeable.

Some types of ROM (EPROM, EEPROM) can be reprogrammed under specific conditions, though not during normal operation.

Mixing up the refresh requirement of DRAM with data modification.